Deploying serverless functions on AWS Lambda offers significant advantages, including scalability, cost-effectiveness, and reduced operational overhead. However, managing Lambda functions manually can become cumbersome, especially in complex deployments. This is where Infrastructure as Code (IaC) tools like Terraform shine. This guide will provide a comprehensive walkthrough of deploying AWS Lambda with Terraform, covering everything from basic setup to advanced configurations, enabling you to automate and streamline your serverless deployments.

Table of Contents

- 1 Understanding the Fundamentals: AWS Lambda and Terraform
- 2 Deploying Your First AWS Lambda Function with Terraform
- 3 Deploying AWS Lambda with Terraform: Advanced Configurations
- 4 Frequently Asked Questions
  - 4.1 Q1: How do I handle sensitive information in my Lambda function?
  - 4.2 Q2: What are the best practices for designing efficient Lambda functions?
  - 4.3 Q3: How can I monitor the performance of my Lambda functions deployed with Terraform?
  - 4.4 Q4: How do I update an existing Lambda function deployed with Terraform?
- 5 Conclusion

Understanding the Fundamentals: AWS Lambda and Terraform

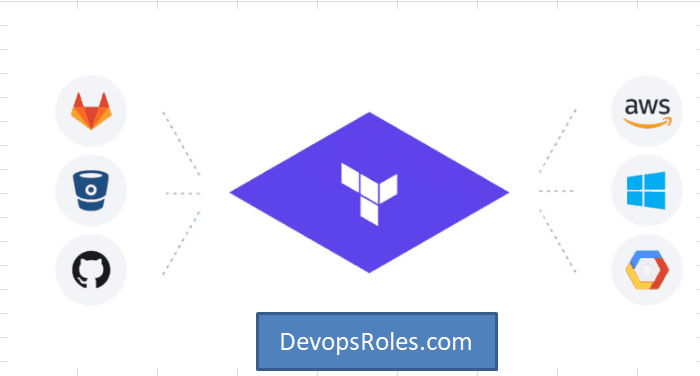

Before diving into the deployment process, let’s briefly review the core concepts of AWS Lambda and Terraform. AWS Lambda is a compute service that lets you run code without provisioning or managing servers. You upload your code, configure triggers, and Lambda handles the execution environment, scaling, and monitoring. Terraform is an IaC tool that allows you to define and provision infrastructure resources across multiple cloud providers, including AWS, using a declarative configuration language (HCL).

AWS Lambda Components

- Function Code: The actual code (e.g., Python, Node.js) that performs a specific task.

- Execution Role: An IAM role that grants the Lambda function the necessary permissions to access other AWS services.

- Triggers: Events that initiate the execution of the Lambda function (e.g., API Gateway, S3 events).

- Environment Variables: Configuration parameters passed to the function at runtime.
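To make the link between triggers, events, and function code concrete, here is a minimal sketch of a Python handler for an S3 trigger. It assumes the standard S3 event notification shape (a `Records` list whose entries carry `s3.bucket.name` and `s3.object.key`); the returned body format is purely illustrative.

```python
import json
from urllib.parse import unquote_plus


def handler(event, context):
    """Illustrative S3-triggered handler: returns the bucket/key pairs found in the event."""
    objects = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        bucket = s3["bucket"]["name"]
        # S3 URL-encodes object keys in event notifications (spaces arrive as '+')
        key = unquote_plus(s3["object"]["key"])
        objects.append({"bucket": bucket, "key": key})
    return {"statusCode": 200, "body": json.dumps(objects)}


if __name__ == "__main__":
    # A trimmed-down S3 event containing only the fields this handler reads
    sample_event = {
        "Records": [
            {"s3": {"bucket": {"name": "my-bucket"}, "object": {"key": "reports/march+2024.csv"}}}
        ]
    }
    print(handler(sample_event, None))
```

Lambda calls the handler with the event produced by whichever trigger fired; the code only has to understand that event's structure.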

Terraform Core Concepts

- Providers: Plugins that interact with specific cloud providers (e.g., the AWS provider).

- Resources: Definitions of the infrastructure components you want to create (e.g., AWS Lambda function, IAM role).

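- State: A file (terraform.tfstate by default) that records the resources Terraform manages and their current attributes. Terraform compares it against your configuration to decide what to create, change, or destroy.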
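State is easiest to inspect through `terraform show -json`, which prints it as JSON. As a hedged sketch, the script below collects resource addresses from that output, assuming the documented layout (`values.root_module.resources`, with nested `child_modules`); the helper names are illustrative.

```python
import json
import subprocess


def resource_addresses(state: dict) -> list:
    """Collect resource addresses from `terraform show -json` output, including child modules."""
    addresses = []
    # An empty state has no "values" key, so fall back to an empty root module
    modules = [state.get("values", {}).get("root_module", {})]
    while modules:
        module = modules.pop()
        for resource in module.get("resources", []):
            addresses.append(resource["address"])
        modules.extend(module.get("child_modules", []))
    return sorted(addresses)


def load_state(workdir: str = ".") -> dict:
    """Run `terraform show -json` in an initialized project directory and parse the result."""
    result = subprocess.run(
        ["terraform", "show", "-json"],
        cwd=workdir, capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)
```

From the project directory, `print(resource_addresses(load_state()))` should list entries such as `aws_lambda_function.hello_world` once the function is deployed; `terraform state list` gives a similar listing directly from the CLI.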

Deploying Your First AWS Lambda Function with Terraform

This section demonstrates a straightforward approach to deploying a simple “Hello World” Lambda function using Terraform. We will cover the necessary Terraform configuration, IAM role setup, and deployment steps.

Setting Up Your Environment

- Install Terraform: Download and install the appropriate Terraform binary for your operating system from the official website: https://www.terraform.io/downloads.html

- Configure AWS Credentials: Configure your AWS credentials using the AWS CLI or environment variables. Ensure you have the necessary permissions to create Lambda functions and IAM roles.

- Create a Terraform Project Directory: Create a new directory for your Terraform project.

Writing the Terraform Configuration

Create a file named main.tf in your project directory with the following code:

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 4.0"
    }
  }
}

provider "aws" {
  region = "us-east-1" # Replace with your desired region
}

# Execution role that the Lambda service is allowed to assume
resource "aws_iam_role" "lambda_role" {
  name = "lambda_execution_role"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Action = "sts:AssumeRole"
        Effect = "Allow"
        Principal = {
          Service = "lambda.amazonaws.com"
        }
      }
    ]
  })
}

# Allow the function to write its logs to CloudWatch Logs
resource "aws_iam_role_policy" "lambda_policy" {
  name = "lambda_policy"
  role = aws_iam_role.lambda_role.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Action = [
          "logs:CreateLogGroup",
          "logs:CreateLogStream",
          "logs:PutLogEvents"
        ]
        Effect   = "Allow"
        Resource = "*"
      }
    ]
  })
}

resource "aws_lambda_function" "hello_world" {
  filename      = "hello.zip"
  function_name = "hello_world"
  role          = aws_iam_role.lambda_role.arn

  # Format is <module>.<function>: hello.py at the zip root, function handler()
  handler = "hello.handler"
  runtime = "python3.9"

  # Changes whenever hello.zip changes, so Terraform redeploys the new code
  source_code_hash = filebase64sha256("hello.zip")
}
```

Creating the Lambda Function Code

Create a file named hello.py with the following code:

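```python
import json


def handler(event, context):
    return {
        'statusCode': 200,
        'body': json.dumps('Hello from AWS Lambda!')
    }
```

Zip the hello.py file into a file named hello.zip, keeping hello.py at the root of the archive (for example, with zip hello.zip hello.py).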
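If you prefer to script the packaging step, here is a minimal sketch using Python's standard `zipfile` module. It writes hello.py at the root of the archive, which is where Lambda looks for the module named in the handler setting; the helper name `build_zip` is illustrative.

```python
import zipfile
from pathlib import Path


def build_zip(source: str = "hello.py", archive: str = "hello.zip") -> Path:
    """Package a single-file Python handler so it sits at the root of the archive."""
    src = Path(source)
    out = Path(archive)
    with zipfile.ZipFile(out, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        # arcname=src.name drops any directory prefix, so the module lands at the zip root
        zf.write(src, arcname=src.name)
    return out
```

Calling `build_zip()` from the project directory writes hello.zip. Rebuild it whenever hello.py changes so that `source_code_hash` changes too.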

Deploying the Lambda Function

- Navigate to your project directory in the terminal.

- Run `terraform init` to initialize the Terraform project.

- Run `terraform plan` to preview the changes.

- Run `terraform apply` to deploy the Lambda function.
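Before (or after) running terraform apply, a quick local call to the handler catches mistakes such as a wrong response shape without a deploy round-trip. This sketch repeats the hello.py handler inline so it runs on its own; in a real project you would import it from hello instead. Passing `None` as the context only works because this handler never reads it.

```python
import json


def handler(event, context):
    # Same logic as hello.py
    return {
        "statusCode": 200,
        "body": json.dumps("Hello from AWS Lambda!"),
    }


def check_handler() -> None:
    """Call the handler with an empty event and verify the response shape."""
    response = handler({}, None)
    assert response["statusCode"] == 200
    assert json.loads(response["body"]) == "Hello from AWS Lambda!"


if __name__ == "__main__":
    check_handler()
    print("handler OK")
```

Once deployed, `aws lambda invoke --function-name hello_world response.json` invokes the live function and writes its response to response.json.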

Deploying AWS Lambda with Terraform: Advanced Configurations

The previous example demonstrated a basic deployment. This section explores more advanced configurations for AWS Lambda with Terraform, enhancing functionality and resilience.

Implementing Environment Variables
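Inside the function, Lambda exposes each configured variable as an ordinary process environment variable, so Python code reads it with `os.environ`. A minimal sketch, assuming the `MY_VARIABLE` name from this section's Terraform snippet and an illustrative fallback default of `not-set`:

```python
import json
import os


def handler(event, context):
    # Values from the Terraform `environment { variables = {...} }` block arrive as env vars
    my_variable = os.environ.get("MY_VARIABLE", "not-set")
    return {
        "statusCode": 200,
        "body": json.dumps({"MY_VARIABLE": my_variable}),
    }
```

Changing the value in Terraform and running terraform apply updates only the function's configuration; the code archive does not need to be rebuilt.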

You can manage environment variables within your Terraform configuration:

```hcl
resource "aws_lambda_function" "hello_world" {
  # ... other configurations ...

  environment {
    variables = {
      MY_VARIABLE = "my_value"
    }
  }
}
```

Using Layers for Dependencies
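For Python runtimes, Lambda extracts each layer under /opt and adds /opt/python to the module search path, so the packages inside an archive like mylayer.zip belong under a top-level `python/` directory. A hedged sketch that builds such an archive from a local folder of installed packages (for example, one filled with `pip install --target layer_src` plus your dependencies); the folder name `layer_src` and the helper `build_layer_zip` are hypothetical.

```python
import zipfile
from pathlib import Path


def build_layer_zip(packages_dir: str = "layer_src", archive: str = "mylayer.zip") -> Path:
    """Zip every file under packages_dir beneath a top-level python/ prefix."""
    root = Path(packages_dir)
    out = Path(archive)
    with zipfile.ZipFile(out, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(root.rglob("*")):
            if path.is_file():
                # e.g. layer_src/requests/api.py -> python/requests/api.py
                arcname = Path("python") / path.relative_to(root)
                zf.write(path, arcname=arcname.as_posix())
    return out
```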

Lambda Layers allow you to package dependencies separately from your function code, improving organization and reusability:

```hcl
resource "aws_lambda_layer_version" "my_layer" {
  filename            = "mylayer.zip"
  layer_name          = "my_layer"
  compatible_runtimes = ["python3.9"]
  source_code_hash    = filebase64sha256("mylayer.zip")
}

resource "aws_lambda_function" "hello_world" {
  # ... other configurations ...

  layers = [aws_lambda_layer_version.my_layer.arn]
}
```

Implementing Dead-Letter Queues (DLQs)
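Failed events end up in the queue as ordinary SQS messages. Per the AWS documentation on asynchronous invocation, the message body is the original event and Lambda adds the message attributes `RequestID`, `ErrorCode`, and `ErrorMessage`. When you later drain the queue for analysis, a helper like the hedged sketch below can turn one message (as returned by SQS ReceiveMessage with all message attributes requested) into a readable summary; the helper name and the sample values are illustrative, not captured from AWS.

```python
import json


def summarize_dlq_message(message: dict) -> dict:
    """Extract the failed event and Lambda's error attributes from one SQS message."""
    attributes = message.get("MessageAttributes", {})

    def attr(name: str):
        # SQS returns each attribute as {"DataType": ..., "StringValue": ...}
        return attributes.get(name, {}).get("StringValue")

    return {
        "request_id": attr("RequestID"),
        "error_code": attr("ErrorCode"),
        "error_message": attr("ErrorMessage"),
        # The body is the original asynchronous event payload
        "event": json.loads(message["Body"]),
    }


if __name__ == "__main__":
    sample = {  # hand-built example message
        "Body": json.dumps({"order_id": 42}),
        "MessageAttributes": {
            "RequestID": {"DataType": "String", "StringValue": "example-request-id"},
            "ErrorMessage": {"DataType": "String", "StringValue": "ValueError: bad input"},
        },
    }
    print(summarize_dlq_message(sample))
```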

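DLQs enhance error handling by capturing failed invocations for later analysis and processing. A DLQ only receives events from asynchronous invocations (for example, S3 or SNS triggers) that still fail after Lambda's automatic retries, and the function's execution role must be allowed to call sqs:SendMessage on the queue. The configuration looks like this: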

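```hcl
resource "aws_sqs_queue" "dead_letter_queue" {
  name = "my-lambda-dlq"
}

# Lambda needs permission to send failed events to the queue
resource "aws_iam_role_policy" "lambda_dlq_policy" {
  name = "lambda_dlq_policy"
  role = aws_iam_role.lambda_role.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Action   = "sqs:SendMessage"
        Effect   = "Allow"
        Resource = aws_sqs_queue.dead_letter_queue.arn
      }
    ]
  })
}

resource "aws_lambda_function" "hello_world" {
  # ... other configurations ...

  dead_letter_config {
    target_arn = aws_sqs_queue.dead_letter_queue.arn
  }

  # Create the permission before Lambda validates the DLQ target
  depends_on = [aws_iam_role_policy.lambda_dlq_policy]
}
```

Implementing Versioning and Aliases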
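With versions published and an alias such as prod in place, the function itself can report what served a request. The Python context object exposes `function_version`, and when a caller invokes through an alias, the alias name is the last segment of `context.invoked_function_arn`. A sketch that returns both, useful for confirming that an alias update or rollback took effect; `FakeContext` is a hypothetical stand-in for the real context object during local runs.

```python
from dataclasses import dataclass
from typing import Optional


def invoked_qualifier(invoked_function_arn: str) -> Optional[str]:
    """Return the alias or version suffix of a qualified function ARN, or None if unqualified."""
    # arn:aws:lambda:<region>:<account>:function:<name>[:<qualifier>]
    parts = invoked_function_arn.split(":")
    return parts[7] if len(parts) == 8 else None


def handler(event, context):
    return {
        "version": context.function_version,
        "qualifier": invoked_qualifier(context.invoked_function_arn),
    }


@dataclass
class FakeContext:
    """Hypothetical stand-in for the Lambda context object, for local testing only."""
    function_version: str
    invoked_function_arn: str
```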

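Versioning enables rollback to previous versions, and aliases simplify referencing specific versions of your Lambda function. Lambda publishes a new, immutable, numbered version only when asked to; in Terraform, set publish = true on the function. Without it, the function's version attribute is $LATEST, so the alias below would track unpublished code rather than a fixed version.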

```hcl
resource "aws_lambda_function" "hello_world" {
  # ... other configurations ...

  # Publish a new numbered version whenever the code or configuration changes
  publish = true
}

resource "aws_lambda_alias" "prod" {
  name             = "prod"
  function_name    = aws_lambda_function.hello_world.function_name
  function_version = aws_lambda_function.hello_world.version
}
```

Frequently Asked Questions

Q1: How do I handle sensitive information in my Lambda function?

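Avoid hardcoding sensitive information directly into your code. Store secrets in AWS Secrets Manager and grant the execution role permission to read them. Environment variables set through Terraform are encrypted at rest by Lambda, but their values are visible to anyone who can read the function's configuration and are stored in plain text in the Terraform state file, so keep real secrets out of them or protect the state accordingly.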
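A common pattern is to put only the secret's name in an environment variable and fetch the value at runtime. The sketch below assumes boto3's Secrets Manager client (`get_secret_value(SecretId=...)`, which returns the value under `SecretString`), a secret stored as a JSON object, and a hypothetical `DB_SECRET_NAME` variable; the client can be passed in so the function runs locally with a stub. The execution role would also need `secretsmanager:GetSecretValue` on that secret.

```python
import json
import os

_client = None            # created lazily, then reused across warm invocations
_secret_cache: dict = {}  # secret_id -> decoded secret


def _default_client():
    global _client
    if _client is None:
        import boto3  # included in the Lambda Python runtime
        _client = boto3.client("secretsmanager")
    return _client


def get_secret(client, secret_id: str) -> dict:
    """Fetch a JSON secret once per execution environment, then serve it from memory."""
    if secret_id not in _secret_cache:
        response = client.get_secret_value(SecretId=secret_id)
        _secret_cache[secret_id] = json.loads(response["SecretString"])
    return _secret_cache[secret_id]


def handler(event, context, client=None):
    # DB_SECRET_NAME (hypothetical) holds the secret's name, not the secret itself
    secret = get_secret(client or _default_client(), os.environ["DB_SECRET_NAME"])
    # Never return or log secret values; report only which keys were loaded
    return {"statusCode": 200, "body": json.dumps({"secret_keys": sorted(secret)})}
```

The cache lives as long as the execution environment, so a rotated secret is only picked up by new environments; add an expiry if rotation matters.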

Q2: What are the best practices for designing efficient Lambda functions?

Design functions to be short-lived and focused on a single task. Minimize external dependencies and optimize code for efficient execution. Leverage Lambda layers to manage common dependencies.

Q3: How can I monitor the performance of my Lambda functions deployed with Terraform?

Use CloudWatch metrics and logs to monitor function invocations, errors, and execution times. Terraform can also be used to create CloudWatch dashboards for centralized monitoring.
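Beyond the built-in Lambda metrics (invocations, errors, duration), a function can publish custom metrics by printing log lines in CloudWatch Embedded Metric Format (EMF), which CloudWatch extracts into metrics from the function's log group. A minimal sketch; the namespace `HelloWorldApp` and metric name `ProcessingTime` are hypothetical, and the record layout follows the published EMF specification, so verify field names against current AWS documentation before relying on it.

```python
import json
import time


def emf_record(namespace: str, function_name: str, metric: str, value: float,
               unit: str = "Milliseconds") -> dict:
    """Build one EMF record: _aws metadata plus dimension and metric values at top level."""
    return {
        "_aws": {
            "Timestamp": int(time.time() * 1000),  # epoch milliseconds
            "CloudWatchMetrics": [
                {
                    "Namespace": namespace,
                    "Dimensions": [["FunctionName"]],
                    "Metrics": [{"Name": metric, "Unit": unit}],
                }
            ],
        },
        "FunctionName": function_name,
        metric: value,
    }


def handler(event, context):
    start = time.perf_counter()
    # ... real work would happen here ...
    elapsed_ms = (time.perf_counter() - start) * 1000
    # stdout goes to CloudWatch Logs, where EMF records are turned into metrics
    print(json.dumps(emf_record("HelloWorldApp", "hello_world", "ProcessingTime", elapsed_ms)))
    return {"statusCode": 200}
```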

Q4: How do I update an existing Lambda function deployed with Terraform?

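Modify your Terraform configuration (or rebuild the code archive), run terraform plan to review the changes, and then run terraform apply to update the infrastructure. Terraform changes only the resources whose configuration differs from what is recorded in state. For code-only changes, the source_code_hash attribute is what tells Terraform that the zip contents changed; without it, a rebuilt hello.zip with the same filename would not trigger an update.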
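Terraform's `filebase64sha256("hello.zip")` is the Base64 encoding of the raw SHA-256 digest of the file's bytes. The sketch below computes the same value in Python, which can help when checking whether a rebuilt archive will actually produce a code update.

```python
import base64
import hashlib


def filebase64sha256(path: str) -> str:
    """Python equivalent of Terraform's filebase64sha256(): Base64 of the raw SHA-256 digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read in chunks so large archives do not need to fit in memory
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return base64.b64encode(digest.digest()).decode("ascii")
```

If the value differs from the one recorded for the function in state, the next terraform plan should show an in-place update of aws_lambda_function.hello_world.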

Conclusion

Deploying AWS Lambda with Terraform provides a robust and efficient way to manage your serverless infrastructure. This guide covered everything from deploying a simple function to implementing advanced configurations such as environment variables, layers, dead-letter queues, and versioning with aliases. By leveraging Terraform's IaC capabilities, you can automate your deployments, improve consistency, and reduce the risk of manual errors. Remember to always follow best practices for security and monitoring to ensure the reliability and scalability of your serverless applications. Mastering AWS Lambda with Terraform is a crucial skill for any modern DevOps engineer or cloud architect.

Thank you for reading the DevopsRoles page!