In my experience as a Senior Staff DevOps Engineer, I’ve often seen deployment friction halt brilliant ideas at the proof-of-concept stage. When the primary goal is validating a data product or ML model, speed is the most critical metric. This guide offers an expert-level strategy for achieving true Rapid Prototyping in GCP by integrating an elite toolset: Terraform for infrastructure-as-code, GitHub Actions for CI/CD, Docker for containerization, and Streamlit for the frontend application layer.

We’ll build an automated, cost-conscious pipeline that lets a single developer push a change to a Git branch and have a deployed prototype running on Google Cloud Platform (GCP) minutes later. The goal is to shrink the idea-to-demo cycle from weeks to hours.

The Foundational Stack for Rapid Prototyping in GCP

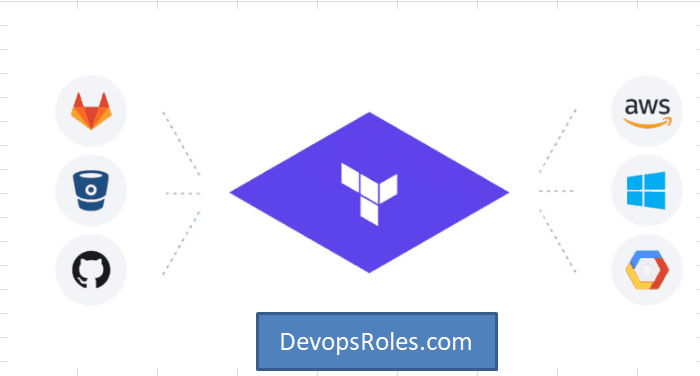

To truly master **Rapid Prototyping in GCP**, we must establish a robust, yet flexible, technology stack. Our chosen components prioritize automation, reproducibility, and minimal operational overhead:

- Infrastructure: Terraform – Define all GCP resources (VPC, Cloud Run, Artifact Registry) declaratively. This ensures the environment is reproducible and easily torn down after validation.

- Application Framework: Streamlit – Allows data scientists and ML engineers to create complex, interactive web applications using only Python, eliminating frontend complexity.

- Containerization: Docker – Standardizes the application environment, bundling all dependencies (Python versions, libraries) and ensuring the prototype runs identically from local machine to GCP.

- CI/CD & Source Control: GitHub & GitHub Actions – Provides the automated workflow for testing, building the Docker image, pushing it to Artifact Registry, and deploying the application to Cloud Run.

Pro-Tip: Choosing the GCP Target

For rapid prototyping of web-facing applications, **Google Cloud Run** is the superior choice over GKE or Compute Engine. It offers serverless container execution, scales down to zero (minimizing cost), and integrates seamlessly with container images from Artifact Registry.

Step 1: Defining Infrastructure with Terraform

Our infrastructure definition must be minimal but secure. We’ll enable the necessary APIs and define our key deployment targets: an **Artifact Registry** repository and the **Cloud Run** service itself. A **VPC network** belongs in the same configuration when the prototype needs private connectivity, but it is omitted from the snippet below. The service will be made public for easy prototype sharing.

Required Terraform Code (main.tf Snippet):

    # Enable the APIs the prototype depends on.
    resource "google_project_service" "apis" {
      for_each = toset([
        "cloudresourcemanager.googleapis.com",
        "run.googleapis.com", # Cloud Run Admin API
        "artifactregistry.googleapis.com",
        "iam.googleapis.com",
      ])

      project            = var.project_id
      service            = each.key
      disable_on_destroy = false
    }

    resource "google_artifact_registry_repository" "repo" {
      location      = var.region
      repository_id = var.repo_name
      format        = "DOCKER"

      depends_on = [google_project_service.apis]
    }

    resource "google_cloud_run_v2_service" "prototype_app" {
      name     = var.service_name
      location = var.region
      ingress  = "INGRESS_TRAFFIC_ALL" # accept traffic from the internet

      template {
        containers {
          image = "${var.region}-docker.pkg.dev/${var.project_id}/${var.repo_name}/${var.image_name}:latest"

          resources {
            cpu_idle = true # allocate CPU only while requests are being processed
            limits = {
              memory = "1Gi"
            }
          }
        }
      }

      traffic {
        type    = "TRAFFIC_TARGET_ALLOCATION_TYPE_LATEST"
        percent = 100
      }

      depends_on = [google_project_service.apis]
    }

    # Allow unauthenticated access for rapid prototyping
    # See: https://cloud.google.com/run/docs/authenticating/public
    resource "google_cloud_run_v2_service_iam_member" "public_invoker" {
      location = google_cloud_run_v2_service.prototype_app.location
      name     = google_cloud_run_v2_service.prototype_app.name
      role     = "roles/run.invoker"
      member   = "allUsers"
    }

This configuration deploys the `latest` tag to keep iteration fast; for production, pin a commit SHA tag so that every revision maps to an exact build. Keeping the service public streamlines sharing, which matters when the goal of rapid prototyping on GCP is quick feedback.
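
To make the `latest` versus commit-SHA trade-off concrete, here is a minimal Python sketch that assembles an Artifact Registry image reference in the same shape the Terraform snippet uses. The helper names `image_uri` and `tag_from_commit` are hypothetical, and the 12-character tag length is an arbitrary choice for illustration.

```python
import re


def tag_from_commit(sha: str, length: int = 12) -> str:
    """Shorten a Git commit SHA into an image tag (hypothetical helper)."""
    sha = sha.strip().lower()
    if not re.fullmatch(r"[0-9a-f]{7,40}", sha):
        raise ValueError(f"not a Git commit SHA: {sha!r}")
    return sha[:length]


def image_uri(region: str, project_id: str, repo: str, image: str,
              tag: str = "latest") -> str:
    """Build '<region>-docker.pkg.dev/<project>/<repo>/<image>:<tag>'."""
    # Docker tags: up to 128 chars, starting with a letter, digit or underscore.
    if not re.fullmatch(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}", tag):
        raise ValueError(f"invalid image tag: {tag!r}")
    return f"{region}-docker.pkg.dev/{project_id}/{repo}/{image}:{tag}"
```

In a GitHub Actions job, the commit SHA is available in the `GITHUB_SHA` environment variable, so something like `image_uri(region, project, repo, image, tag_from_commit(os.environ["GITHUB_SHA"]))` would yield an immutable reference that can be rolled back to later.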

Step 2: Containerizing the Streamlit Application with Docker

The Streamlit application benefits from a minimal, multi-stage Dockerfile that keeps the image small and build times fast.

Dockerfile Example:

    # Stage 1: Builder
    FROM python:3.10-slim AS builder
    WORKDIR /app
    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt

    # Stage 2: Production
    FROM python:3.10-slim
    WORKDIR /app
    # Copy installed packages and console scripts (including `streamlit`) from the builder
    COPY --from=builder /usr/local/lib/python3.10/site-packages/ /usr/local/lib/python3.10/site-packages/
    COPY --from=builder /usr/local/bin/ /usr/local/bin/
    COPY . .

    # Streamlit defaults to port 8501; we override it to 8080 below to match Cloud Run
    EXPOSE 8080

    # The command to run the application
    CMD ["streamlit", "run", "app.py", "--server.port=8080", "--server.address=0.0.0.0", "--server.enableCORS=false"]

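Note: We explicitly set the Streamlit port to **8080** via the `CMD` instruction. Cloud Run sends requests to port 8080 by default and passes the configured port to the container in the `PORT` environment variable, so the app must listen there rather than on Streamlit’s default of 8501.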
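
If you would rather not hard-code the port, a small launcher can read `PORT` at startup. The following is a sketch under assumptions: the file name `entrypoint.py` and the function names are hypothetical, and the flags used are standard Streamlit `server.*` options.

```python
import os
from typing import Mapping

DEFAULT_PORT = 8080  # Cloud Run's default container port


def streamlit_command(env: Mapping[str, str], script: str = "app.py") -> list[str]:
    """Build the Streamlit launch command from the PORT environment variable."""
    port = int(env.get("PORT", DEFAULT_PORT))
    if not 1 <= port <= 65535:
        raise ValueError(f"PORT out of range: {port}")
    return [
        "streamlit", "run", script,
        f"--server.port={port}",
        "--server.address=0.0.0.0",  # listen on all interfaces inside the container
        "--server.headless=true",    # do not try to open a browser
    ]


def main() -> None:
    """Replace this process with Streamlit so it receives signals directly."""
    command = streamlit_command(os.environ)
    os.execvp(command[0], command)
```

Saved as a hypothetical `entrypoint.py` with the usual `if __name__ == "__main__": main()` guard added, this could replace the Dockerfile’s `CMD` with `["python", "entrypoint.py"]`, so the same image keeps working if the service’s container port is changed later.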

Step 3: Implementing CI/CD with GitHub Actions

The core of our **Rapid Prototyping GCP** pipeline is the CI/CD workflow, automated via GitHub Actions. A push to the `main` branch should trigger a container build, push, and deployment.

GitHub Actions Workflow (.github/workflows/deploy.yml):

    name: Build and Deploy Prototype to Cloud Run

    on:
      push:
        branches:
          - main
      workflow_dispatch:

    env:
      PROJECT_ID: ${{ secrets.GCP_PROJECT_ID }}
      GCP_REGION: us-central1
      SERVICE_NAME: streamlit-prototype
      REPO_NAME: prototype-repo
      IMAGE_NAME: streamlit-app

    jobs:
      deploy:
        runs-on: ubuntu-latest
        permissions:
          contents: 'read'
          id-token: 'write' # Required for OIDC authentication

        steps:
          - name: Checkout Code
            uses: actions/checkout@v4

          - id: 'auth'
            name: 'Authenticate to GCP'
            uses: 'google-github-actions/auth@v2'
            with:
              workload_identity_provider: ${{ secrets.WIF_PROVIDER }}
              service_account: ${{ secrets.SA_EMAIL }}
              token_format: 'access_token' # exposes a short-lived token for the registry login

          # Without a registry login, the push to Artifact Registry is rejected
          - name: Log in to Artifact Registry
            uses: docker/login-action@v3
            with:
              registry: ${{ env.GCP_REGION }}-docker.pkg.dev
              username: oauth2accesstoken
              password: ${{ steps.auth.outputs.access_token }}

          - name: Set up Docker
            uses: docker/setup-buildx-action@v3

          - name: Build and Push Docker Image
            uses: docker/build-push-action@v5
            with:
              context: .
              push: true
              # Workflow-level variables must be read through the `env` context
              tags: ${{ env.GCP_REGION }}-docker.pkg.dev/${{ env.PROJECT_ID }}/${{ env.REPO_NAME }}/${{ env.IMAGE_NAME }}:latest

          - name: Deploy to Cloud Run
            uses: google-github-actions/deploy-cloudrun@v2
            with:
              service: ${{ env.SERVICE_NAME }}
              region: ${{ env.GCP_REGION }}
              image: ${{ env.GCP_REGION }}-docker.pkg.dev/${{ env.PROJECT_ID }}/${{ env.REPO_NAME }}/${{ env.IMAGE_NAME }}:latest
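
The workflow above builds and deploys but does not verify that the new revision actually serves traffic. A post-deploy smoke test could look like the following Python sketch. It assumes the app exposes Streamlit’s `/_stcore/health` endpoint (present in recent Streamlit releases); the function names and retry defaults are hypothetical.

```python
import time
import urllib.request
from typing import Callable


def http_status(url: str, timeout_s: float) -> int:
    """Return the HTTP status code of a GET request to `url`."""
    with urllib.request.urlopen(url, timeout=timeout_s) as response:
        return response.status


def wait_until_healthy(
    base_url: str,
    *,
    path: str = "/_stcore/health",  # assumed Streamlit health endpoint
    attempts: int = 5,
    delay_s: float = 3.0,
    timeout_s: float = 10.0,
    fetch: Callable[[str, float], int] = http_status,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll the health endpoint until it returns 200 or attempts run out."""
    url = base_url.rstrip("/") + path
    for attempt in range(1, attempts + 1):
        try:
            if fetch(url, timeout_s) == 200:
                return True
        except OSError:
            # URLError, HTTPError and timeouts all derive from OSError;
            # treat them as "not ready yet", for example during a cold start.
            pass
        if attempt < attempts:
            sleep(delay_s)
    return False
```

A final workflow step could call `wait_until_healthy` with the service URL (the `deploy-cloudrun` action exposes it as its `url` output) and fail the job when it returns `False`. The `fetch` and `sleep` parameters exist only so the retry logic can be tested without network access.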

Advanced Concept: GitHub OIDC Integration

We use **Workload Identity Federation (WIF)**, not static service account keys, for secure authentication. The `id-token: 'write'` permission lets the job request a short-lived OIDC token from GitHub, which the auth action exchanges for short-lived GCP credentials. Because no long-lived key is stored in the repository, this significantly improves the security posture of our CI/CD pipeline. Refer to the official GCP IAM documentation for setting up the required WIF pool and provider.
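
A common stumbling block is the value stored in the `WIF_PROVIDER` secret: it is the provider’s full resource name, built from the project *number* rather than the project ID. The sketch below formats and validates that name. The helper names are hypothetical, and the 4 to 32 character ID pattern is an assumption based on GCP’s documented naming limits.

```python
import re

# Pool and provider IDs: lowercase letters, digits and hyphens (assumed 4-32 chars).
_ID = r"[a-z0-9-]{4,32}"
_PROVIDER_RE = re.compile(
    rf"projects/(?P<project_number>\d+)/locations/global/"
    rf"workloadIdentityPools/(?P<pool>{_ID})/providers/(?P<provider>{_ID})"
)


def parse_provider(name: str) -> dict[str, str]:
    """Split a provider resource name into its parts, or raise ValueError."""
    match = _PROVIDER_RE.fullmatch(name.strip())
    if match is None:
        raise ValueError(
            "expected projects/<number>/locations/global/"
            "workloadIdentityPools/<pool>/providers/<provider>"
        )
    return match.groupdict()


def provider_resource_name(project_number: int, pool_id: str, provider_id: str) -> str:
    """Build the value expected by the `workload_identity_provider` input."""
    name = (
        f"projects/{project_number}/locations/global/"
        f"workloadIdentityPools/{pool_id}/providers/{provider_id}"
    )
    parse_provider(name)  # validate before returning
    return name
```

Running `parse_provider` on the secret’s value before saving it catches the typical mistake of pasting a project ID such as `my-project` where the numeric project number belongs.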

Best Practices for Iterative Development and Cost Control

A successful **Rapid Prototyping GCP** pipeline isn’t just about deployment; it’s about making iteration cheap and fast, and managing the associated cloud costs.

Rapid Iteration with Streamlit’s Application State

Leverage Streamlit’s native caching mechanisms (e.g., `@st.cache_data`, `@st.cache_resource`) and session state (`st.session_state`) effectively. This prevents re-running expensive computations (like model loading or large data fetches) on every user interaction, reducing application latency and improving the perceived speed of the prototype.

Cost Management with Cloud Run

- Scale-to-Zero: Ensure your Cloud Run service is configured to scale down to 0 minimum instances (`min-instances: 0`). This is crucial. If the prototype isn’t being actively viewed, you pay nothing for compute time.

- Resource Limits: Start with a small CPU and memory allocation (e.g., 1 vCPU and 512 MiB) and increase only if the application needs it. Prototypes should be cost-aware.

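- Teardown with Terraform: For temporary projects, run `terraform destroy` when validation is complete. For environments that must persist, remove only the service with a targeted destroy (`terraform destroy -target=google_cloud_run_v2_service.prototype_app`) and run `terraform apply` to re-create it when needed. Note that `terraform taint` does not remove anything: it only marks a resource for re-creation on the next apply, and it is deprecated in favor of `terraform apply -replace=...`.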

Frequently Asked Questions (FAQ)

How is this Rapid Prototyping stack different from using App Engine or GKE?

The key difference is **operational overhead and cost**. App Engine (Standard) is limited by language runtimes, and GKE (Kubernetes) introduces significant complexity (managing nodes, deployments, services, ingress) that is unnecessary for a rapid proof-of-concept. Cloud Run is a fully managed container platform that handles autoscaling, patching, and networking, allowing you to focus purely on the application logic for your prototype.

What are the security implications of making the Cloud Run service unauthenticated?

Making the service public (`allow-unauthenticated`) is acceptable for internal or temporary prototypes, as it simplifies sharing. For prototypes that handle sensitive data or move toward production, you must update the Terraform configuration to remove the public access IAM policy and enforce authentication (e.g., using IAP or requiring a valid GCP identity token).
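
Once public access is removed, callers have to present an identity token in the `Authorization` header. The following sketch shows one way to do that from a developer machine using only the standard library and the `gcloud` CLI. The function names are hypothetical, and the caller’s account is assumed to hold `roles/run.invoker` on the service.

```python
import subprocess
import urllib.request


def identity_token() -> str:
    """Ask the local gcloud CLI for an identity token for the active account."""
    result = subprocess.run(
        ["gcloud", "auth", "print-identity-token"],
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


def authorized_request(url: str, token: str) -> urllib.request.Request:
    """Build a GET request carrying the token as a bearer credential."""
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})


def fetch(url: str) -> int:
    """Call an authenticated Cloud Run service and return the HTTP status."""
    request = authorized_request(url, identity_token())
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status
```

This suits scripted checks and API-style calls. For a browser-facing Streamlit prototype, a proxy-based option such as IAP is usually the more practical way to enforce sign-in.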

Can I use Cloud Build instead of GitHub Actions for this CI/CD?

Absolutely. Cloud Build is GCP’s native CI/CD platform and can be a faster alternative, especially for image builds that stay within the Google Cloud network. The GitHub Actions approach was chosen here for its seamless integration with the source control repository (GitHub) and its broad community support, simplifying the adoption for teams already using GitHub.

Conclusion

Building a modern **Rapid Prototyping GCP** pipeline requires a holistic view of the entire software lifecycle. By coupling the declarative power of **Terraform** with the automation of **GitHub Actions** and the serverless execution of **Cloud Run**, you gain an unparalleled ability to quickly validate ideas. This blueprint empowers expert DevOps teams and SREs to dramatically reduce the time-to-market for data applications and machine learning models, moving from concept to deployed, interactive prototype in minutes, not days.