Introduction

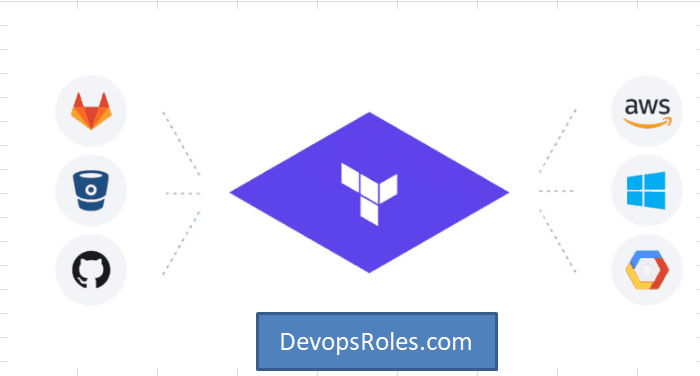

In cloud infrastructure, managing Kubernetes clusters efficiently is crucial for smooth operations and scaling. One tool that simplifies this process is Terraform, an infrastructure-as-code tool. Used with Amazon Elastic Kubernetes Service (EKS), Terraform automates the creation, configuration, and management of Kubernetes clusters, making it easier to deploy applications at scale.

In this guide, we’ll focus on one specific feature: Amazon EKS Auto Mode (often written “Automode”), configured through Terraform. Auto Mode hands routine cluster operations such as node provisioning, load balancing, and block storage to AWS, which reduces manual intervention. Whether you’re a beginner or an experienced user, this article walks through the benefits, setup process, and examples of using Terraform to manage EKS clusters in Auto Mode.

What is Terraform EKS Automode?

Before diving into its usage, let’s define the term. EKS Auto Mode is a feature of Amazon EKS itself, not of Terraform: when it is enabled, AWS manages the cluster’s compute (nodes are provisioned and scaled on demand), load balancing, and block storage. Terraform’s role is to switch the feature on through the AWS provider’s aws_eks_cluster resource and to declare the resources around it, such as the VPC, subnets, and IAM roles.

With Auto Mode enabled, you no longer need to create and size node groups by hand; nodes are launched from node pools as pods are scheduled. Declaring the cluster in Terraform keeps that setup repeatable, which reduces configuration errors in your deployment pipeline.

Benefits of Using Terraform EKS Automode

1. Simplified Cluster Management

Terraform lets you declare the cluster, VPC, subnets, and IAM roles in one configuration, while Auto Mode takes over day-to-day tasks on the AWS side: provisioning nodes and keeping them up to date, and managing load balancers and EBS volumes for your workloads.

2. Scalability

With Auto Mode, EKS adds nodes when pods cannot be scheduled and removes them when they sit idle, so the cluster follows traffic spikes and scales down when demand decreases. If you use managed node groups instead, you set the minimum, maximum, and desired sizes in Terraform and rely on a separate autoscaler to adjust them.

3. Version Control and Reusability

Terraform configurations are plain text, so you can keep them in a version control system such as Git (hosted on GitHub, for example), which makes them easy to review and to reuse across environments or teams.
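As an illustration of reuse across environments, the sketch below parameterizes the cluster name and region with input variables. The variable names and the naming pattern are assumptions made for this example; they do not come from the rest of this guide.

```hcl
# Hypothetical input variables; names and defaults are illustrative only.
variable "environment" {
  description = "Deployment environment, for example dev or prod"
  type        = string
}

variable "region" {
  description = "AWS region to deploy into"
  type        = string
  default     = "us-west-2"
}

locals {
  # Derive a per-environment cluster name, e.g. "example-dev"
  cluster_name = "example-${var.environment}"
}

provider "aws" {
  region = var.region
}
```

The same code can then be applied per environment, for example with terraform apply -var="environment=dev".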

4. Cost Efficiency

Automated scaling removes idle capacity when demand drops, which helps reduce over-provisioning and unnecessary costs.

How to Set Up Terraform EKS Automode

To start using Terraform EKS Automode, you’ll first need to set up a few prerequisites:

Prerequisites:

- Terraform: Installed and configured on your local machine or CI/CD pipeline.

- AWS CLI: Configured with necessary permissions.

- AWS Account: An active AWS account with appropriate IAM permissions for managing EKS, EC2, and other AWS resources.

- Kubernetes CLI (kubectl): Installed to interact with the EKS cluster.
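The Auto Mode arguments are only available in recent releases of the AWS provider, so it can help to pin a minimum version. The sketch below assumes that support arrived in the 5.79 release line; treat that number as an assumption and check the provider changelog before relying on it.

```hcl
terraform {
  required_providers {
    aws = {
      source = "hashicorp/aws"
      # Assumption: Auto Mode arguments were added around provider 5.79;
      # verify against the provider changelog.
      version = ">= 5.79"
    }
  }
}
```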

Step-by-Step Setup Guide

1. Define Terraform Provider

In your Terraform configuration file, begin by defining the AWS provider:

    provider "aws" {
      region = "us-west-2"
    }

2. Create EKS Cluster Resource

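Next, define the aws_eks_cluster resource in your Terraform configuration:

    resource "aws_eks_cluster" "example" {
      name     = "example-cluster"
      role_arn = aws_iam_role.eks_cluster_role.arn

      # Auto Mode relies on EKS access entries and AWS-managed add-ons
      access_config {
        authentication_mode = "API"
      }
      bootstrap_self_managed_addons = false

      vpc_config {
        subnet_ids = aws_subnet.example[*].id
      }

      # Enable EKS Auto Mode: compute, load balancing, and block storage
      # must be switched on together
      compute_config {
        enabled       = true
        node_pools    = ["general-purpose"]
        node_role_arn = aws_iam_role.eks_node_role.arn
      }
      kubernetes_network_config {
        elastic_load_balancing {
          enabled = true
        }
      }
      storage_config {
        block_storage {
          enabled = true
        }
      }
    }

The aws_eks_cluster resource has no enable_configure_automode argument. Auto Mode is enabled by setting enabled = true in compute_config, kubernetes_network_config.elastic_load_balancing, and storage_config.block_storage, which lets EKS manage nodes, load balancers, and EBS volumes for the cluster. These blocks require a recent release of the AWS provider.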

3. Define Node Groups

With Auto Mode enabled, EKS launches nodes from the node pools named in compute_config, so a managed node group is optional. A node group is a group of EC2 instances that run the Kubernetes workloads. If you also want a group that you size yourself, define it with aws_eks_node_group:

    resource "aws_eks_node_group" "example" {
      cluster_name    = aws_eks_cluster.example.name
      node_group_name = "example-node-group"
      node_role_arn   = aws_iam_role.eks_node_role.arn
      subnet_ids      = aws_subnet.example[*].id

      # Bounds for the node group; Terraform sets the initial desired size
      scaling_config {
        desired_size = 2
        min_size     = 1
        max_size     = 3
      }
    }

The scaling_config block sets the size bounds of the node group. The aws_eks_node_group resource has no enable_auto_scaling argument: a managed node group only changes size when something such as Cluster Autoscaler adjusts its desired size, whereas Auto Mode node pools scale on their own.
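The node group above refers to aws_iam_role.eks_node_role, which this guide does not define. A minimal sketch of that role follows, assuming the role name "example-eks-node-role" (hypothetical) and the three AWS managed policies commonly attached to managed node group roles; check the list against current AWS documentation.

```hcl
# Role assumed by the EC2 instances in the node group.
resource "aws_iam_role" "eks_node_role" {
  name = "example-eks-node-role" # hypothetical name

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Effect    = "Allow"
        Action    = "sts:AssumeRole"
        Principal = { Service = "ec2.amazonaws.com" }
      },
    ]
  })
}

locals {
  # AWS managed policies typically used for managed node groups
  node_group_policies = [
    "AmazonEKSWorkerNodePolicy",
    "AmazonEKS_CNI_Policy",
    "AmazonEC2ContainerRegistryReadOnly",
  ]
}

resource "aws_iam_role_policy_attachment" "node_group" {
  for_each   = toset(local.node_group_policies)
  role       = aws_iam_role.eks_node_role.name
  policy_arn = "arn:aws:iam::aws:policy/${each.value}"
}
```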

4. Apply the Terraform Configuration

Once your Terraform configuration is set up, run the following commands to apply the changes:

    terraform init
    terraform apply

terraform init downloads the AWS provider, and terraform apply shows the execution plan and, once you confirm it, creates the EKS cluster and the related resources.
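Once the cluster exists, output values make it easier to connect to it. The sketch below assumes the aws_eks_cluster.example resource from the previous steps; the output names themselves are arbitrary.

```hcl
output "cluster_name" {
  value = aws_eks_cluster.example.name
}

output "cluster_endpoint" {
  description = "URL of the Kubernetes API server"
  value       = aws_eks_cluster.example.endpoint
}

output "cluster_ca_certificate" {
  description = "Base64-encoded certificate authority data for the cluster"
  value       = aws_eks_cluster.example.certificate_authority[0].data
}
```

With the cluster name known, running aws eks update-kubeconfig --region us-west-2 --name example-cluster writes a kubeconfig entry so that kubectl can reach the cluster.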

Example 1: Basic Terraform EKS Automode Setup

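To give you a better understanding, here’s a simple example of a Terraform script that creates an EKS cluster with Auto Mode enabled, its IAM roles, and the required networking components:

    provider "aws" {
      region = "us-west-2"
    }

    resource "aws_vpc" "example" {
      cidr_block = "10.0.0.0/16"
    }

    # EKS requires subnets in at least two Availability Zones
    resource "aws_subnet" "example" {
      count             = 2
      vpc_id            = aws_vpc.example.id
      cidr_block        = cidrsubnet(aws_vpc.example.cidr_block, 8, count.index + 1)
      availability_zone = element(["us-west-2a", "us-west-2b"], count.index)
    }

    resource "aws_iam_role" "eks_cluster_role" {
      assume_role_policy = jsonencode({
        Version = "2012-10-17"
        Statement = [
          {
            # sts:TagSession is needed by the cluster role in Auto Mode
            Action    = ["sts:AssumeRole", "sts:TagSession"]
            Principal = { Service = "eks.amazonaws.com" }
            Effect    = "Allow"
          },
        ]
      })
    }

    # Role used by the nodes that Auto Mode launches
    resource "aws_iam_role" "eks_node_role" {
      assume_role_policy = jsonencode({
        Version = "2012-10-17"
        Statement = [
          {
            Action    = "sts:AssumeRole"
            Principal = { Service = "ec2.amazonaws.com" }
            Effect    = "Allow"
          },
        ]
      })
    }

    resource "aws_eks_cluster" "example" {
      name                          = "example-cluster"
      role_arn                      = aws_iam_role.eks_cluster_role.arn
      bootstrap_self_managed_addons = false

      access_config {
        authentication_mode = "API"
      }
      vpc_config {
        subnet_ids = aws_subnet.example[*].id
      }
      compute_config {
        enabled       = true
        node_pools    = ["general-purpose"]
        node_role_arn = aws_iam_role.eks_node_role.arn
      }
      kubernetes_network_config {
        elastic_load_balancing {
          enabled = true
        }
      }
      storage_config {
        block_storage {
          enabled = true
        }
      }
    }

This script declares a basic Auto Mode cluster with its VPC, two subnets, and IAM roles. Before workloads can run, the roles still need the AWS managed EKS policies attached, and the subnets need a route to the internet (or VPC endpoints) so that nodes can pull container images.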
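The IAM roles in this guide are created without any permissions policies. A minimal sketch of the attachments follows, assuming the role names aws_iam_role.eks_cluster_role and aws_iam_role.eks_node_role used in this guide and the AWS managed policies documented for Auto Mode clusters; verify the policy list against current AWS documentation before use.

```hcl
locals {
  # Managed policies for the cluster role when Auto Mode is enabled
  cluster_policies = [
    "AmazonEKSClusterPolicy",
    "AmazonEKSComputePolicy",
    "AmazonEKSBlockStoragePolicy",
    "AmazonEKSLoadBalancingPolicy",
    "AmazonEKSNetworkingPolicy",
  ]

  # Managed policies for the role used by Auto Mode nodes
  auto_mode_node_policies = [
    "AmazonEKSWorkerNodeMinimalPolicy",
    "AmazonEC2ContainerRegistryPullOnly",
  ]
}

resource "aws_iam_role_policy_attachment" "cluster" {
  for_each   = toset(local.cluster_policies)
  role       = aws_iam_role.eks_cluster_role.name
  policy_arn = "arn:aws:iam::aws:policy/${each.value}"
}

resource "aws_iam_role_policy_attachment" "auto_mode_node" {
  for_each   = toset(local.auto_mode_node_policies)
  role       = aws_iam_role.eks_node_role.name
  policy_arn = "arn:aws:iam::aws:policy/${each.value}"
}
```

Using for_each over a set keeps one attachment per policy in state, so adding or removing a policy name changes only that attachment.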

Advanced Scenarios for Terraform EKS Automode

Automating Multi-Region Deployments

The same configuration can deploy Auto Mode clusters in several regions. This typically means wrapping the cluster definition in a Terraform module and instantiating it once per region, each time with a region-specific provider configuration.
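One way to sketch a multi-region layout is with provider aliases and one module call per region. The module path ./modules/eks and its cluster_name input are hypothetical; they stand for a module that you would write around the cluster resources shown earlier.

```hcl
provider "aws" {
  alias  = "west"
  region = "us-west-2"
}

provider "aws" {
  alias  = "east"
  region = "us-east-1"
}

module "eks_west" {
  source = "./modules/eks" # hypothetical local module

  providers = {
    aws = aws.west
  }

  cluster_name = "example-west" # hypothetical module input
}

module "eks_east" {
  source = "./modules/eks" # hypothetical local module

  providers = {
    aws = aws.east
  }

  cluster_name = "example-east" # hypothetical module input
}
```

Passing the provider explicitly keeps each module instance bound to a single region, so region-specific values stay in the root configuration.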

Integrating with CI/CD Pipelines

You can integrate Terraform EKS Automode into your CI/CD pipeline for continuous delivery. By automating the deployment of EKS clusters, you can reduce human error and ensure that every new environment follows the same configuration standards.
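A pipeline usually needs shared state so that every run sees the same infrastructure. The sketch below stores state in an S3 backend; the bucket name and key are placeholders chosen for illustration, and the bucket is assumed to exist already.

```hcl
terraform {
  backend "s3" {
    bucket  = "example-terraform-state" # hypothetical bucket name
    key     = "eks/example-cluster/terraform.tfstate"
    region  = "us-west-2"
    encrypt = true
  }
}
```

A pipeline job could then run terraform init -input=false, terraform plan -out=tfplan, and terraform apply tfplan, so that the plan that was reviewed is the one that gets applied.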

FAQs About Terraform EKS Automode

1. What is EKS Automode?

EKS Auto Mode is a feature of Amazon EKS, not of Terraform. When it is enabled, AWS manages node provisioning and scaling, load balancing, and block storage for the cluster. Terraform is used to enable it and to create the surrounding resources.

2. How do I enable Terraform EKS Automode?

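In the aws_eks_cluster resource, set enabled = true in compute_config, in kubernetes_network_config.elastic_load_balancing, and in storage_config.block_storage; the three settings must be enabled together. The resource has no enable_configure_automode argument.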

3. Can Terraform EKS Automode help with auto-scaling?

Yes. With Auto Mode, EKS adds and removes nodes according to the pods waiting to be scheduled, so the cluster adapts to workload changes without manual intervention.

4. Do I need to configure anything manually with Automode?

Yes. Auto Mode manages nodes, load balancing, and block storage, but you still define the VPC and subnets, the cluster and node IAM roles, and any node pool settings your workloads require.

Conclusion

In this guide, we’ve explored how to use Terraform with EKS Auto Mode to simplify the creation and management of Amazon EKS clusters. Terraform declares the cluster, networking, and IAM roles, while Auto Mode handles node provisioning, load balancing, and storage, which together reduce complexity, scale resources efficiently, and help control costs.

With EKS Auto Mode managed through Terraform, you can focus more on your application deployments and less on managing infrastructure.