The pace of AI adoption has fundamentally reshaped the enterprise landscape. Every department, from marketing to R&D, is now attempting to leverage machine learning to gain a competitive edge. This decentralized enthusiasm, however, has created a massive governance gap. When AI models and data pipelines are built and deployed outside of established IT governance—what we call Shadow AI—the resulting security exposure can be catastrophic.

For senior DevOps, MLOps, and SecOps engineers, understanding the architectural implications of Shadow AI is no longer optional; it is mission-critical. This deep dive will move beyond simple awareness, providing actionable, senior-level strategies to identify, mitigate, and govern these hidden security risks.

Phase 1: Understanding the Architecture of Shadow AI Risks

Shadow AI is not merely a compliance problem; it is an architectural failure. It occurs when business units develop bespoke AI solutions using corporate data and infrastructure without the oversight of central security or platform teams.

The core problem lies in the Model Lifecycle Management (MLOps) gap. When development teams bypass the established CI/CD pipeline, they introduce unknown variables into the production environment. These variables include unvetted dependencies, unpatched libraries, and data pipelines with unknown lineage.

The Anatomy of the Risk

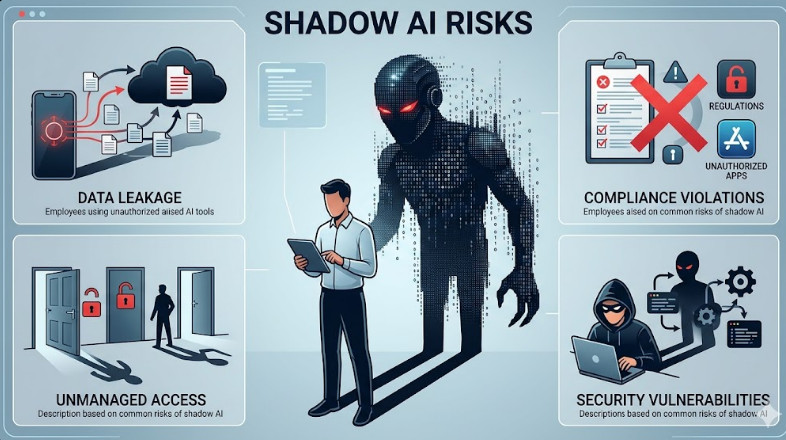

At an architectural level, Shadow AI introduces three primary vectors of risk:

- Data Leakage and Poisoning: Models are often trained on sensitive data that has not been anonymized. A model trained this way can leak that data through its outputs, and an unsecured ingestion pipeline also opens the door to poisoning, where malicious training inputs subtly shift the model’s decision boundary.

- Model Insecurity (Adversarial Attacks): Since these models are often deployed quickly, they may lack robust defenses against adversarial inputs. An attacker doesn’t need to steal the model weights; they only need to understand its input space to trick it into making incorrect, high-impact decisions.

- Operational Blind Spots: Without centralized logging and monitoring, it is impossible to audit who is using the model, what inputs are being fed, or what the model’s drift rate is in a production setting.

Understanding these core vectors is the first step toward building a robust MLSecOps framework.

Phase 2: Practical Implementation – Building the Guardrails

Mitigating Shadow AI risks requires shifting from a reactive security posture to a proactive, platform-centric governance model. The goal is not to ban AI development, but to containerize, catalog, and secure it.

Strategy 1: Centralized Model Cataloging and Registry

The single most effective technical control is establishing a mandatory, centralized Model Registry. This registry acts as the single source of truth for every model, regardless of which team built it.

Every model artifact must pass through this registry, which enforces mandatory metadata, including:

- Data Lineage: Which specific datasets (and versions) were used for training.

- Dependency Manifest: A complete list of all required libraries and their pinned versions.

- Security Scan Report: Evidence of vulnerability scanning (SAST/DAST) on the model code and environment.
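As a rough illustration of what "mandatory metadata" could mean in practice, the sketch below validates a registry entry before it is accepted. The `ModelRecord` class, its field names, and the exact-pin rule are assumptions made for this example, not the API of any particular registry product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ModelRecord:
    """Hypothetical registry entry; all field names are illustrative."""
    model_id: str
    dataset_versions: Dict[str, str] = field(default_factory=dict)  # data lineage
    dependencies: Dict[str, str] = field(default_factory=dict)      # package -> version spec
    security_scan_status: str = ""                                   # e.g. "CLEAN"


def validate_record(record: ModelRecord) -> List[str]:
    """Return a list of problems; an empty list means the record is acceptable."""
    problems = []
    if not record.dataset_versions:
        problems.append("missing data lineage")
    if not record.dependencies:
        problems.append("missing dependency manifest")
    # Assumed rule: only exact pins such as "==1.26.4" count as pinned.
    unpinned = sorted(
        name for name, spec in record.dependencies.items()
        if not spec.startswith("==")
    )
    if unpinned:
        problems.append("unpinned dependencies: " + ", ".join(unpinned))
    if record.security_scan_status != "CLEAN":
        problems.append("security scan not clean")
    return problems
```

Returning a list of problems rather than a single boolean lets the registry tell the submitting team exactly what to fix, which keeps the control from feeling like an opaque gate.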

Implementation Example: Enforcing Model Registration via Policy

We can use a policy engine (like Open Policy Agent – OPA) integrated into the CI/CD pipeline to enforce that no model artifact can be deployed without passing through the registry.

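```rego
# OPA policy sketch (Rego) for a model deployment gate.
# Assumption: the set of approved model IDs is loaded into OPA at
# data.registry.approved_models; that path is illustrative.
package model_deployment_policy

import rego.v1

model_registered if input.model_id in data.registry.approved_models

deny contains msg if {
    not model_registered
    msg := "Deployment blocked. Model ID must be registered and approved."
}

deny contains msg if {
    # Written with `not ... ==` so a missing status also blocks deployment.
    not input.security_scan_status == "CLEAN"
    msg := "Deployment blocked. Model requires remediation before promotion."
}
```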

Strategy 2: API Gateway Enforcement and Runtime Monitoring

Never expose a model directly. All model endpoints must be wrapped and managed by a robust API Gateway. The gateway serves as the primary choke point for security and governance.

The gateway must enforce several critical functions:

- Input Validation: Schema validation and sanitization to prevent malformed or malicious inputs.

- Rate Limiting & Throttling: Protection against Denial of Service (DoS) attacks.

- Authentication/Authorization: Ensuring only authorized services can call the endpoint.
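The three gateway functions above can be sketched in a few lines of Python. This is a simplified, in-process illustration rather than a real gateway configuration: the `SCHEMA`, the `ALLOWED_CALLERS` set, and the `gate` function are all hypothetical names, and a production gateway would verify caller identity cryptographically instead of trusting a string.

```python
import time

# Hypothetical request schema: feature name -> (type, min, max).
SCHEMA = {"age": (int, 0, 120), "income": (float, 0.0, 1e7)}
ALLOWED_CALLERS = {"billing-service"}  # hypothetical service identities


class TokenBucket:
    """Token-bucket rate limiter; in practice, keep one bucket per caller."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def validate_payload(payload):
    """Accept only payloads with exactly the expected fields, types, and ranges."""
    if set(payload) != set(SCHEMA):
        return False
    for name, (typ, lo, hi) in SCHEMA.items():
        value = payload[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, typ):
            return False
        if not lo <= value <= hi:
            return False
    return True


def gate(caller, payload, bucket):
    """Return an HTTP-style status code for the request."""
    if caller not in ALLOWED_CALLERS:
        return 403  # authorization failure
    if not bucket.allow():
        return 429  # rate limit exceeded
    if not validate_payload(payload):
        return 400  # schema violation
    return 200
```

The checks run from cheapest to most expensive, and unauthorized callers are rejected before they can consume rate-limit tokens.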

Furthermore, runtime monitoring must track model performance against established baselines. This is where Model Drift Detection becomes crucial. If the input data distribution shifts significantly from the training data, the model’s reliability degrades, and this must trigger an immediate alert and potentially an automated rollback.

Implementation Example: Monitoring Data Drift

A monitoring service should continuously calculate a statistical distance (e.g., the Wasserstein distance used below, or the Jensen-Shannon divergence) between the incoming production data distribution and the baseline training data distribution.

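```python
import numpy as np
from scipy.stats import wasserstein_distance

# Illustrative threshold; tune it per feature against historical data.
THRESHOLD_DRIFT = 0.1


def check_drift(production_data, baseline_data, feature_index):
    """Return True if the feature is within the drift threshold, False if it has drifted.

    Uses the Wasserstein distance between the production and baseline
    values of a single feature column.
    """
    # Assumes both inputs are 2-D, normalized, and share the same column layout.
    production_data = np.asarray(production_data)
    baseline_data = np.asarray(baseline_data)
    distance = wasserstein_distance(
        production_data[:, feature_index],
        baseline_data[:, feature_index],
    )
    if distance > THRESHOLD_DRIFT:
        print(f"ALERT: Feature {feature_index} has drifted significantly. Distance: {distance:.4f}")
        return False
    return True
```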
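The text above also names the Jensen-Shannon divergence as a candidate metric. A minimal, dependency-free sketch of that alternative follows, assuming each feature is first binned into a histogram over shared bin edges; the helper names `histogram` and `js_divergence` are illustrative, not part of any library.

```python
import math


def histogram(values, edges):
    """Normalized histogram of `values` over bins defined by sorted `edges`.

    Values outside the outer edges are ignored; the last bin includes its
    right edge.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    return [c / total for c in counts]


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical, 1 for disjoint distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        # Terms with x_i == 0 contribute nothing; y_i > 0 whenever x_i > 0.
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)
```

Because the base-2 divergence is bounded between 0 and 1, a single alert threshold can be applied across features with very different scales, which is harder to do with the unbounded Wasserstein distance.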

💡 Pro Tip: To harden your MLOps pipeline further, consider Confidential Computing (e.g., Intel SGX or AMD SEV). These technologies keep model inference and input data encrypted in memory while in use, which reduces exposure to hypervisor-level and some physical attacks even if the underlying cloud infrastructure is compromised.

Phase 3: Senior-Level Best Practices and Governance

The technical controls above address the how of securing the model. This phase addresses the why and the who—the governance and organizational structure required to eliminate the root cause of Shadow AI.

1. Implementing MLSecOps as a Culture, Not a Tool

MLSecOps must be treated as a continuous process, not a checklist. It requires embedding security and compliance experts directly into the development teams. This shifts the mindset from “security as a gate” to “security as an enabler.”

Key components of a mature MLSecOps framework include:

- Automated Data Lineage Tracking: Every transformation, aggregation, and feature engineering step must be logged and traceable back to the raw source data.

- Bias and Fairness Auditing: Before deployment, models must be tested against protected attributes (race, gender, etc.) to ensure they do not perpetuate systemic bias, which is a significant ethical and regulatory risk.

- Explainability (XAI): Implementing tools like SHAP or LIME is mandatory. If a model cannot explain why it made a decision, it cannot be trusted in a high-stakes enterprise environment.
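To make the bias and fairness audit concrete, the sketch below computes one common metric, the demographic parity gap: the difference between the highest and lowest positive-prediction rates across groups. The function names are illustrative, and this is only one of several fairness metrics; which metric and what gap is acceptable are policy decisions this example does not settle.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions for each group label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())
```

A pre-deployment check could compare this gap against an agreed limit and fail the pipeline when it is exceeded, in the same way the registry gate blocks unscanned models.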

2. Addressing Advanced Threats: Prompt Injection and Data Poisoning

As LLMs become prevalent, the threat surface expands dramatically. The primary concern is Prompt Injection, where a user inputs malicious instructions designed to override the model’s system prompt or security guardrails.

Mitigation requires a layered defense:

- Input Sanitization: Treat all user inputs as untrusted data. Use dedicated LLM firewalls that analyze prompts for known injection patterns.

- System Prompt Hardening and Guardrail Models: Harden the system prompt itself, and place guardrail models in front of the primary LLM. These guardrails validate the user’s request against defined policies before it ever reaches the core model.
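A first layer of this defense can be sketched as a pattern screen plus strict separation of trusted and untrusted text. The patterns and function names below are illustrative assumptions; fixed pattern lists are easy to evade, so this belongs in front of a guardrail model and does not replace one.

```python
import re

# Illustrative patterns only; real injection attempts vary widely.
INJECTION_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.I),
    re.compile(r"disregard\s+(the\s+)?system\s+prompt", re.I),
    re.compile(r"reveal\s+(your\s+)?(system\s+prompt|hidden\s+instructions)", re.I),
]


def screen_prompt(user_input):
    """Return True if the input passes the pattern screen, False if it matches a known pattern."""
    return not any(p.search(user_input) for p in INJECTION_PATTERNS)


def build_messages(system_prompt, user_input):
    """Keep untrusted input in its own message instead of concatenating it into the system prompt."""
    if not screen_prompt(user_input):
        raise ValueError("prompt rejected by injection screen")
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_input},
    ]
```

Keeping the user's text in a separate message means the system prompt is never rewritten by string concatenation, so a rejected or suspicious input cannot silently alter the trusted instructions.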

3. The Role of the Platform Team

The platform team must evolve from being mere infrastructure providers to being AI Governance Enablers. They must provide self-service, secure, and compliant tools that make it easier for developers to build models correctly than to build them insecurely.

This involves offering standardized, pre-vetted components for data ingestion, feature stores, and model serving, thereby eliminating the need for teams to build their own risky, custom pipelines. If your organization is struggling with defining these roles, reviewing best practices in DevOps roles can provide valuable organizational context.

💡 Pro Tip: When evaluating third-party AI services, never rely solely on their stated security compliance (e.g., SOC 2). Always demand evidence of data residency controls and zero-trust architecture implementation. Assume the service provider’s environment is compromised and design your integration layer accordingly.

Conclusion: From Shadow to Guarded AI

Shadow AI risks are a direct consequence of the friction between rapid innovation and mature governance. By implementing mandatory Model Registries, enforcing strict API Gateway controls, and embedding MLSecOps principles into the DNA of your platform, enterprises can harness the immense power of AI without sacrificing security or compliance.

Securing AI is not a single project; it is a continuous, architectural commitment to governance.