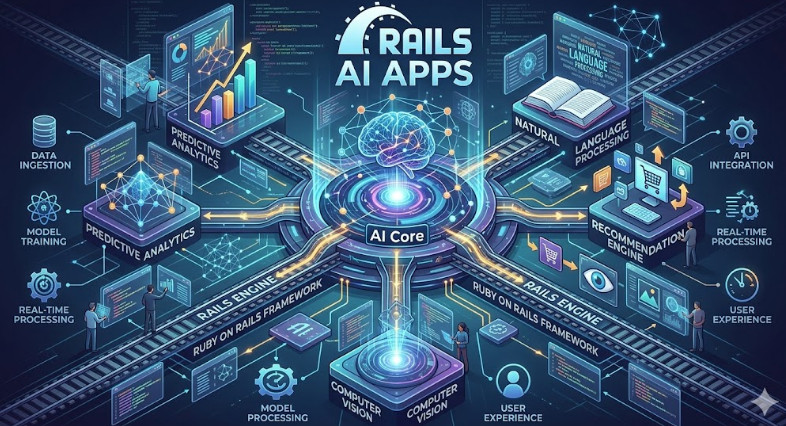

The modern application stack is inherently complex, and adding Machine Learning models and AI components makes deployment considerably harder. We are no longer simply deploying CRUD applications; we are deploying Rails AI Apps: systems that need robust dependency management, specialized runtime environments, and strong uptime guarantees.

For Senior DevOps and MLOps engineers, the goal isn’t just to get the code running; it’s to achieve zero-downtime deployment while maintaining rigorous security and scalability. Traditional deployment methods often introduce unacceptable service interruptions or manual toil.

This comprehensive guide will walk you through the architecture, implementation, and advanced best practices required to deploy sophisticated Rails AI Apps onto a Virtual Private Server (VPS) using Kamal. We will leverage the power of Docker and SSH to create a resilient, automated, and highly efficient CI/CD pipeline.


Phase 1: Understanding the Core Architecture and Concepts

Before writing a single line of configuration, we must understand the components and how they interact to guarantee reliability. Our architecture relies on three pillars: Rails, Docker, and Kamal.

The Role of Each Component

- Rails (The Application Layer): This is the core business logic. Since we are dealing with AI, the application often includes specialized gems (like `tensorflow-ruby` or custom model wrappers) that dictate specific runtime dependencies.

- Docker (The Containerization Layer): Docker solves the "it works on my machine" problem. It packages the application, its dependencies, and the precise runtime environment into an immutable container image. This guarantees parity from development to production.

- Kamal (The Orchestration Layer): Kamal is the deployment tool that sits between your Git repository and your VPS. It builds the Docker image from your committed code and pushes it to a container registry. It then connects to each server over SSH to pull and start the new container, and performs the zero-downtime switchover.

The Zero-Downtime Mechanism

Achieving zero downtime is not magic; it is a controlled process of traffic shifting. Kamal facilitates this by ensuring the new container version is fully healthy and ready to accept traffic before the old container is gracefully decommissioned.

This typically involves:

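- Blue/Green Switchover: Kamal 2 runs kamal-proxy on each server (Kamal 1 used Traefik for this role). During a deploy, Kamal starts the new container alongside the old one and polls its health check endpoint. kamal-proxy routes traffic to the new container only after the check passes, and the old container is then stopped. This is a per-host blue/green switch rather than a gradual canary rollout.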

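- Database Migrations: Crucially, database migrations must be non-blocking and backward-compatible, because the old and new containers briefly run side by side during the switchover. Kamal does not run migrations for you. Use techniques like decoupling migrations from the code release (expand first, contract later) or rehearsing them against a staging copy of the database first.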
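The health-gated switchover can be illustrated with a small plain-Ruby simulation. It is not Kamal's or kamal-proxy's code. `Container` and `Proxy` are hypothetical stand-ins, health checks are scripted booleans, and the delay between real checks is omitted. It shows the two outcomes that matter: traffic moves only after a healthy check, and a release that never becomes healthy leaves the old version serving.

```ruby
# Simulation of a health-gated blue/green switchover on one host.
# Containers are plain Ruby objects; nothing here talks to Docker or kamal-proxy.
Container = Struct.new(:version, :health_results, keyword_init: true) do
  # Each call consumes the next scripted health-check result (true = healthy).
  def healthy?
    health_results.shift || false
  end
end

class Proxy
  attr_reader :target, :log

  def initialize(target)
    @target = target
    @log = []
  end

  # Route traffic to `candidate` only after it passes a health check, then stop
  # the old container. Returns false (and keeps the old target) if it never does.
  def switch_to(candidate, max_checks: 5)
    max_checks.times do |attempt|
      if candidate.healthy?
        old = @target
        @target = candidate
        @log << "routed traffic to #{candidate.version}"
        @log << "stopped #{old.version}"
        return true
      end
      @log << "health check #{attempt + 1} failed for #{candidate.version}"
    end
    @log << "deploy aborted; #{@target.version} keeps serving"
    false
  end
end

proxy = Proxy.new(Container.new(version: "v1", health_results: [true]))

# v2 needs three checks (e.g. while its model loads) before it is routed to.
puts proxy.switch_to(Container.new(version: "v2", health_results: [false, false, true]))
puts proxy.target.version

# v3 never becomes healthy, so v2 keeps serving.
puts proxy.switch_to(Container.new(version: "v3", health_results: []), max_checks: 2)
puts proxy.target.version
```

Running it prints `true`, `v2`, `false`, `v2`.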

💡 Pro Tip: When architecting for Rails AI Apps, never treat the AI model serving as a simple background job. Treat it as a critical, stateful service. Run it in a dedicated container, for example a separate Kamal role or accessory, so that model loading, versioning, and resource allocation are isolated from the core Rails API container. Service-mesh sidecars such as Istio or Linkerd fill this role on Kubernetes, but they are not part of a Kamal-on-VPS setup.

Phase 2: Practical Implementation – Setting up the Pipeline

This phase details the hands-on steps to configure Kamal for a robust deployment.

Step 2.1: Containerizing the Rails AI App

The first step is creating a multi-stage Dockerfile. This is critical for keeping the final image small and secure, eliminating build-time dependencies from the production runtime.

We assume your Gemfile includes all necessary AI dependencies.

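```dockerfile
# Stage 1: Builder stage (install gems and precompile assets)
FROM ruby:3.2.2-slim AS builder

WORKDIR /app
ENV RAILS_ENV=production \
    BUNDLE_WITHOUT="development:test"

# Compilers and headers for native gem extensions (assumes PostgreSQL;
# add whatever system libraries your AI gems need)
RUN apt-get update -qq && \
    apt-get install --no-install-recommends -y build-essential libpq-dev && \
    rm -rf /var/lib/apt/lists/*

COPY Gemfile Gemfile.lock ./
RUN bundle install --jobs 4 --retry 3

# Copy application code and precompile assets
# (SECRET_KEY_BASE_DUMMY is supported by Rails 7.1 and later)
COPY . .
RUN SECRET_KEY_BASE_DUMMY=1 bundle exec rails assets:precompile

# Stage 2: Production stage (minimal runtime)
FROM ruby:3.2.2-slim

WORKDIR /app
ENV RAILS_ENV=production \
    BUNDLE_WITHOUT="development:test"

# Runtime libraries only, no compilers
RUN apt-get update -qq && \
    apt-get install --no-install-recommends -y libpq5 && \
    rm -rf /var/lib/apt/lists/*

# Copy only the installed gems and the built application from the builder stage
COPY --from=builder /usr/local/bundle /usr/local/bundle
COPY --from=builder /app /app

# Create an unprivileged user that owns the writable directories, and run as it
RUN useradd --create-home --shell /bin/bash appuser && \
    mkdir -p log tmp storage && \
    chown -R appuser:appuser log tmp storage
USER appuser

EXPOSE 3000
# CMD rather than ENTRYPOINT, so other Kamal roles can override the command
CMD ["bundle", "exec", "rails", "server", "-b", "0.0.0.0", "-p", "3000"]
```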

Step 2.2: Configuring Kamal

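Kamal reads its configuration from a YAML file, `config/deploy.yml` (generated by `kamal init`). This file defines the target servers, image, registry, environment variables, and proxy settings.

Here is a sample `config/deploy.yml` for a production VPS setup, in Kamal 2 syntax:

```yaml
# config/deploy.yml (Kamal 2)

# Name of the app; used to name containers
service: rails-ai-app

# Image repository; Kamal tags each build with the Git commit SHA automatically
image: rails-ai-app

# One entry per VPS; list additional hosts to run more copies for availability
servers:
  web:
    - your_vps_ip_address

# kamal-proxy terminates HTTP/HTTPS on ports 80/443 and forwards to the app
proxy:
  ssl: true                 # automatic Let's Encrypt certificate
  host: app.example.com     # placeholder domain
  app_port: 3000            # the port Rails listens on inside the container
  healthcheck:
    path: /up

registry:
  server: registry.example.com
  username: deployuser
  password:
    - KAMAL_REGISTRY_PASSWORD   # value read from .kamal/secrets

# Environment variables for the app and the AI model
env:
  clear:
    RAILS_ENV: production
    AI_MODEL_PATH: /models/v2.1/model.bin
  secret:                       # values read from .kamal/secrets at deploy time
    - DATABASE_URL
    - SECRET_KEY_BASE

# Read-only mount for model files kept on the host (placeholder host path)
volumes:
  - "/srv/models:/models:ro"

ssh:
  user: deployuser

builder:
  arch: amd64
```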

Step 2.3: The Deployment Workflow

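Once configured, the whole deployment runs from the command line. `kamal deploy` builds the image from your committed code, tags it with the commit SHA, and pushes it to the registry itself, so separate `docker build` and `docker push` steps are not needed:

```shell
# 1. First deploy only: prepare the servers (installs Docker if missing), boot kamal-proxy, and deploy
kamal setup

# 2. Every later release: build, push, and roll out the new version
kamal deploy

# If you keep per-environment files such as config/deploy.production.yml
kamal deploy -d production
```

Kamal handles the rest. It connects to each server via SSH, pulls the new image, and starts the new container next to the old one. Once the new container's health check passes, it tells kamal-proxy to route traffic to it, and only then stops the old container, so the Rails AI App stays accessible throughout.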

Phase 3: Senior-Level Best Practices, Security, and Scaling

For senior engineers, the goal is not just deployment, but operational excellence. This requires addressing security, resource management, and failure modes.

3.1 Advanced Security Hardening (SecOps Focus)

Security must be baked into the deployment process, not bolted on afterward.

- Secrets Management: Never hard-code sensitive credentials (API keys, database passwords) in `config/deploy.yml` or commit them to Git. Kamal 2 reads secret values from the `.kamal/secrets` file at deploy time. That file can pull them from a dedicated store, either through `kamal secrets fetch` adapters (for example for AWS Secrets Manager or 1Password) or through any shell command, such as one that queries HashiCorp Vault. Kamal then passes the values to the containers as environment variables.

- Network Segmentation: The AI model service should ideally run in a separate, private subnet. Only the API gateway should have access to the model endpoint. This limits the blast radius if the core Rails application is compromised.

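- Least Privilege Principle: Ensure the container runs as a non-root user (as shown in the `Dockerfile` example). The `deployuser` account on the VPS should have only the permissions needed to run Docker and access the required directories. Keep in mind that membership in the `docker` group is effectively root-equivalent, so protect that account's SSH keys accordingly.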
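One way to enforce the secrets rule is a small pre-deploy check, sketched here in plain Ruby with the standard `yaml` library. It assumes Kamal 2's `env: clear:` / `env: secret:` layout (or a flat `env:` hash) and flags clear-text variables whose names look like credentials. The `leaked_secret_keys` helper and the name pattern are hypothetical. A configuration file that uses ERB would need to be rendered before parsing.

```ruby
require "yaml"

# Heuristic for variable names that usually hold credentials (an assumption; tune it).
SECRET_NAME_PATTERN = /SECRET|PASSWORD|TOKEN|API_KEY|PRIVATE_KEY|DATABASE_URL/

# Returns the names of env vars that look like secrets but are set as clear values.
# Handles both `env: {clear: ..., secret: ...}` and a flat `env:` hash.
def leaked_secret_keys(deploy_yaml)
  config = YAML.safe_load(deploy_yaml) || {}
  env = config["env"] || {}
  clear_env = (env.key?("clear") || env.key?("secret")) ? (env["clear"] || {}) : env
  clear_env.keys.grep(SECRET_NAME_PATTERN)
end

deploy_yaml = <<~YAML
  service: rails-ai-app
  env:
    clear:
      RAILS_ENV: production
      AI_MODEL_PATH: /models/v2.1/model.bin
      SECRET_KEY_BASE: hard-coded-value
    secret:
      - DATABASE_URL
YAML

leaked = leaked_secret_keys(deploy_yaml)
puts leaked.empty? ? "ok" : "move to env.secret: #{leaked.join(', ')}"
```

Here it prints `move to env.secret: SECRET_KEY_BASE`. In CI, the same check could exit non-zero instead of printing.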

3.2 Performance and Resource Optimization (MLOps Focus)

Rails AI Apps are often memory- and CPU-hungry, so proper resource allocation is non-negotiable for stability.

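- Resource Limits: Always set explicit CPU and memory limits on containers that load models. Kamal does not generate docker-compose files or Kubernetes manifests. Instead, per-role `options` in `config/deploy.yml` are passed through to `docker run`, for example `memory: 4g` and `cpus: 2` (or `cpu-shares`). If the AI model spikes memory usage, the limit ensures that only that container is killed and restarted by Docker's restart policy, rather than the whole host running out of memory.

- Health Checks: Implement detailed health checks. A simple HTTP 200 from a route that never touches the model is insufficient. Kamal's proxy health check acts as the readiness gate during each deploy, so point it at an endpoint that returns 200 only once the AI model has loaded into memory and is ready to accept inference requests. Kamal has no separate liveness probe; crashed containers are handled by Docker's restart policy.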

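Example readiness gate in Kamal syntax:

```yaml
# config/deploy.yml: the proxy health check acts as the readiness gate
proxy:
  healthcheck:
    path: /health/model_ready   # should return 200 only once the model is loaded
    interval: 10                # seconds between checks
    timeout: 5                  # seconds before a single check fails

# How long Kamal waits for a new container to become healthy (model loading can be slow)
deploy_timeout: 120
```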
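The logic behind a model-aware readiness endpoint such as `/health/model_ready` can be sketched without Rails. `ModelRegistry` is a hypothetical class that records whether the model has finished loading and maps that state to a status code. It returns 503 while loading or after a load error, and 200 only once the model is in memory, which is what a health check used as a readiness gate needs.

```ruby
require "json"

# Hypothetical holder for an in-memory model. The loader is any callable; in an
# app it would read the file at ENV["AI_MODEL_PATH"] with your model library.
class ModelRegistry
  def initialize(loader)
    @loader = loader
    @model = nil
    @error = nil
    @mutex = Mutex.new
  end

  # Call once at boot (often in a background thread, so the web server can
  # answer health checks with "loading" while the model is read into memory).
  def load!
    model = @loader.call
    @mutex.synchronize { @model = model }
  rescue StandardError => e
    @mutex.synchronize { @error = e.message }
  end

  def ready?
    @mutex.synchronize { !@model.nil? }
  end

  # [status, json_body] for /health/model_ready: 200 only once the model is loaded.
  def readiness
    @mutex.synchronize do
      if @model
        [200, JSON.generate(status: "ready")]
      elsif @error
        [503, JSON.generate(status: "failed", error: @error)]
      else
        [503, JSON.generate(status: "loading")]
      end
    end
  end
end

registry = ModelRegistry.new(-> { :fake_model })
p registry.readiness   # [503, "{\"status\":\"loading\"}"]
registry.load!
p registry.readiness   # [200, "{\"status\":\"ready\"}"]
```

In a Rails controller action, `status, body = registry.readiness` followed by `render json: body, status: status` would expose this. Where the registry instance lives (an initializer, a constant) is up to the app.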

3.3 Troubleshooting and Resilience

What happens when a deployment fails?

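- Automated Rollbacks: Kamal gates every switchover on the health check. If the new container never becomes healthy, the deploy fails and the previous version keeps serving traffic. If a release passes its health check but misbehaves afterwards, `kamal rollback VERSION` restarts a previously deployed version, which Kamal keeps on the servers for this purpose. Always test this rollback mechanism.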

- Database Migration Safety: For complex migrations involving AI data structures, use a phased migration approach. First, add the new columns/tables (non-blocking). Second, deploy the new code that writes to both old and new structures (dual-write). Third, run a cleanup script to remove old structures.
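The dual-write phase can be sketched in plain Ruby, with a `Struct` standing in for an ActiveRecord model. `Document`, `EmbeddingStore`, and the two embedding columns are hypothetical. Writes fill both the legacy and the new structure, and reads prefer the new one, with a fallback for rows written before the dual-write release.

```ruby
require "json"

# Hypothetical record during an expand/contract migration: old code reads the
# legacy `embedding_json` column, new code reads the new `embedding_vector` one.
Document = Struct.new(:id, :embedding_json, :embedding_vector, keyword_init: true)

class EmbeddingStore
  # Phase 2 (dual-write): every write fills both structures, so containers
  # running the old and the new release can serve traffic side by side.
  def write(doc, vector)
    doc.embedding_json = JSON.generate(vector)
    doc.embedding_vector = vector.dup
    doc
  end

  # New code prefers the new column but falls back to the legacy one for rows
  # written before the dual-write release.
  def read(doc)
    doc.embedding_vector || (doc.embedding_json && JSON.parse(doc.embedding_json))
  end
end

store = EmbeddingStore.new
fresh = store.write(Document.new(id: 1), [0.5, 1.0])
legacy = Document.new(id: 2, embedding_json: "[2.0, 3.0]")
p store.read(fresh)    # [0.5, 1.0]
p store.read(legacy)   # [2.0, 3.0]
```

Only after no deployed release reads `embedding_json` would the cleanup step drop that column.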

💡 Pro Tip: When dealing with large, binary AI models, do not bake them into the Docker image. Instead, sync them from centralized storage (like S3 or MinIO) to a directory on the host and mount that directory into the container as a read-only volume. This allows you to update the model version without rebuilding the entire container image, drastically speeding up deployment cycles.
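With the model outside the image, the app should verify the mounted file at boot. This plain-Ruby sketch assumes the `AI_MODEL_PATH` variable from the deploy configuration and a versioned directory layout like `/models/v2.1/model.bin`. `resolve_model_path` and `model_version` are hypothetical helpers, not part of Rails or Kamal.

```ruby
require "tmpdir"

# Hypothetical boot-time check for a model file mounted read-only into the
# container (for example at /models). Failing fast here gives a clear error in
# the deploy log instead of a failure on the first inference request.
def resolve_model_path(env = ENV)
  path = env.fetch("AI_MODEL_PATH") { raise KeyError, "AI_MODEL_PATH is not set" }
  raise Errno::ENOENT, path unless File.file?(path)
  raise IOError, "model file is not readable: #{path}" unless File.readable?(path)
  path
end

# Version label from the directory layout, e.g. /models/v2.1/model.bin -> "v2.1".
def model_version(path)
  File.basename(File.dirname(path))
end

# Usage with a temporary directory standing in for the mounted volume.
Dir.mktmpdir do |dir|
  model_dir = File.join(dir, "v2.1")
  Dir.mkdir(model_dir)
  path = File.join(model_dir, "model.bin")
  File.binwrite(path, "placeholder weights")
  resolved = resolve_model_path({ "AI_MODEL_PATH" => path })
  puts model_version(resolved)   # v2.1
end
```

Rolling out a new model then means placing it under a new versioned directory and pointing `AI_MODEL_PATH` at it, with no image rebuild.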

Conclusion: Mastering the AI Deployment Lifecycle

Deploying Rails AI Apps is a sophisticated exercise in modern DevOps practices. By mastering the interplay between Docker’s immutability, Kamal’s orchestration power, and rigorous security hardening, you can achieve a truly resilient, zero-downtime platform.

If your team is looking to deepen its expertise in modern deployment patterns, an overview of DevOps roles can provide a valuable roadmap. By adopting these advanced techniques, your organization can move beyond simple hosting and achieve true platform reliability for its most complex AI initiatives.