Architecting for Scale: Mastering Modern Inference Providers in MLOps

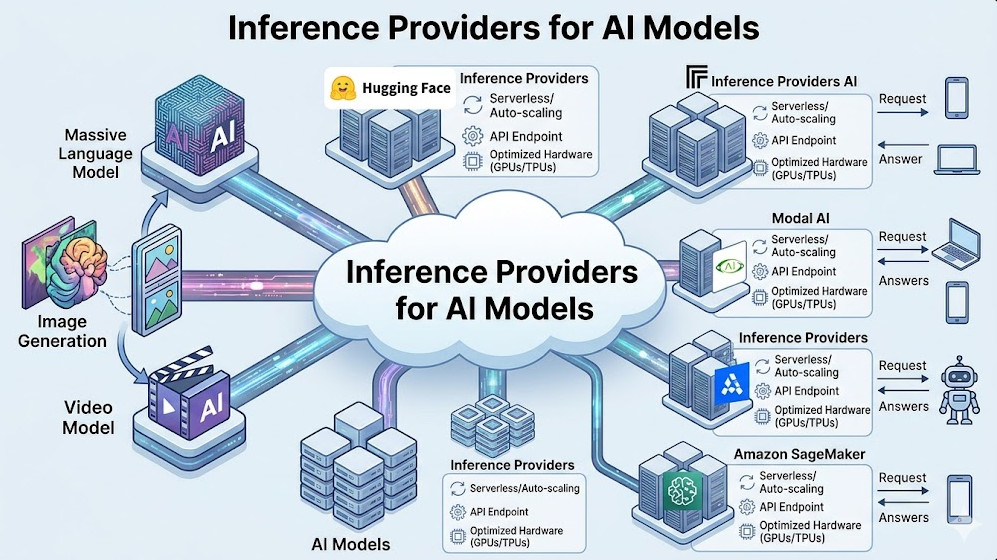

The deployment of sophisticated AI models—from large language models (LLMs) to complex computer vision systems—has become the defining challenge of modern DevOps. Building a model is only half the battle; ensuring it can handle production-grade traffic with low latency, high uptime, and optimal cost efficiency requires a specialized layer: the Inference Provider.

For senior MLOps and AI engineers, simply calling an API endpoint is insufficient. You must architect the entire serving infrastructure. This deep dive will move beyond basic tutorials. We will explore the architectural nuances, performance tuning parameters, and critical SecOps best practices required to select, configure, and optimize enterprise-grade Inference Providers.

If your current deployment strategy struggles with fluctuating load, unpredictable latency spikes, or escalating cloud costs, this guide provides the blueprint for a robust, scalable solution.

Phase 1: Understanding the Inference Provider Landscape

What exactly is an Inference Provider? At its core, it is the optimized, scalable runtime environment responsible for loading a serialized model artifact (e.g., one exported from PyTorch or TensorFlow) and executing predictions (inference) under controlled, high-throughput conditions.

A naive deployment often wraps the model in a basic Flask or FastAPI endpoint. That works for prototypes but tends to fail under real-world load, because requests are typically processed one at a time and the GPU sits underutilized. Production requires specialized tooling that manages resource allocation, request batching, and GPU scheduling.

The Core Architectural Decision: Self-Host vs. Managed Service

The first critical decision is the hosting model.

- Self-Hosting (e.g., Kubernetes + Triton/TorchServe): This offers maximum control over the stack, allowing granular tuning of every parameter—from networking policies to CUDA versions. It is ideal for organizations with mature DevOps teams and strict compliance needs. However, it introduces significant operational overhead.

- Managed Providers (e.g., DeepInfra, Replicate, Hugging Face Inference Endpoints): These services abstract away much of the underlying infrastructure complexity. They handle scaling, load balancing, and often provide built-in optimization layers (like quantization support). This drastically reduces time-to-market, but you must weigh the risk of vendor lock-in and the limits on customization.

When evaluating Inference Providers, engineers must benchmark not just the average latency, but also the P99 latency (the value that 99% of requests stay under) and the cost-per-inference under peak load. Averages hide the slow tail that users actually notice.
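To make the difference between average and tail latency concrete, here is a minimal sketch using only the Python standard library. The latency samples, the hourly price, and the throughput figure are all made-up illustration values, and `percentile` uses the simple nearest-rank definition rather than any particular monitoring tool's method.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

def cost_per_inference(hourly_cost_usd, requests_per_second):
    """Cost of one request when an instance sustains the given throughput for a full hour."""
    return hourly_cost_usd / (requests_per_second * 3600)

# Hypothetical benchmark: 98 fast requests and 2 slow outliers (milliseconds).
latencies_ms = [40.0] * 98 + [900.0, 1200.0]

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.1f} ms  p50={p50_ms:.1f} ms  p99={p99_ms:.1f} ms")
# Hypothetical pricing: a $3.60/hour instance sustaining 100 requests per second.
print(f"cost per inference: ${cost_per_inference(3.6, 100):.6f}")
```

In this toy data set the mean looks healthy while the P99 is more than an order of magnitude worse, which is exactly the gap an average-only benchmark would miss.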

💡 Pro Tip: When comparing self-hosted solutions versus managed services, always model the Total Cost of Ownership (TCO). A managed service might have a higher per-call cost, but if it eliminates the need for dedicated SRE staff to manage Kubernetes upgrades, GPU drivers, and scaling policies, the TCO can be significantly lower.
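The TCO comparison can be sketched as simple arithmetic. Every number below (request volume, per-request price, GPU hourly rate, SRE cost, and the fraction of an engineer's time) is a hypothetical placeholder, not a quote from any vendor; substitute your own figures.

```python
HOURS_PER_MONTH = 730  # average hours in a month

def monthly_tco_managed(requests_per_month, price_per_request_usd):
    """Managed service: pay per call, no dedicated infrastructure staff assumed."""
    return requests_per_month * price_per_request_usd

def monthly_tco_self_hosted(gpu_hourly_usd, gpu_count, sre_fraction, sre_monthly_cost_usd):
    """Self-hosted: always-on GPUs plus the share of SRE time spent on the cluster."""
    infrastructure = gpu_hourly_usd * gpu_count * HOURS_PER_MONTH
    staffing = sre_fraction * sre_monthly_cost_usd
    return infrastructure + staffing

# Hypothetical inputs for illustration only.
managed = monthly_tco_managed(requests_per_month=10_000_000, price_per_request_usd=0.0005)
self_hosted = monthly_tco_self_hosted(
    gpu_hourly_usd=2.0, gpu_count=2, sre_fraction=0.5, sre_monthly_cost_usd=15_000
)

print(f"managed:     ${managed:,.0f}/month")
print(f"self-hosted: ${self_hosted:,.0f}/month")
# Request volume at which the managed bill would equal the self-hosted cost.
print(f"break-even:  {self_hosted / 0.0005:,.0f} requests/month")
```

With these placeholder inputs the staffing term dominates the self-hosted total, which is the effect the tip above describes. At higher request volumes the comparison can flip, so rerun it with your own traffic.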

Phase 2: Practical Implementation Deep Dive – Optimizing the Pipeline

Let’s focus on the mechanics of optimization, using a specialized provider such as DeepInfra, which integrates directly into the Hugging Face ecosystem, as the running example.

The goal is to achieve maximum throughput while maintaining acceptable latency. This requires optimizing the model artifact itself and the serving parameters.

Model Optimization Techniques

Two of the techniques below change the model artifact before deployment; the third, batching, is applied by the serving runtime at request time:

- Quantization: Reducing the precision of model weights (e.g., from FP32 to INT8). This reduces model size and memory bandwidth requirements by up to 4x for that conversion, often yielding significant speedups with minimal accuracy loss. Always re-validate accuracy on a held-out set after quantizing.

- Graph Compilation: Exporting the model to a static graph (for example, TorchScript, or ONNX for execution in ONNX Runtime), which removes Python interpreter overhead and lets the runtime fuse and execute optimized low-level operations.

- Batching: Often the most important throughput lever. Instead of processing requests sequentially (batch size = 1), the Inference Provider aggregates multiple incoming requests into a single batch. This maximizes GPU utilization, as GPUs are designed for parallel processing. The trade-off is that each request may wait briefly for its batch to fill, so batch size must be tuned against the latency target.
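To show what quantization does to the numbers themselves, here is a toy sketch of symmetric per-tensor INT8 quantization in plain Python. It is an illustration of the idea only: the weight values are invented, and production toolchains typically add per-channel scales, calibration data, and hardware-specific kernels that this sketch omits.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # one float step per integer step
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize_int8(quantized, scale):
    """Recover approximate float values from the integers and the shared scale."""
    return [q * scale for q in quantized]

# Hypothetical weights for illustration.
weights = [0.82, -1.27, 0.003, 0.45, -0.61]

q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(original - approx) for original, approx in zip(weights, restored))

print("int8 values:", q)
print("scale:", scale)
print("largest round-trip error:", max_error)
```

The round-trip error is bounded by half the scale, which is why small weights (like `0.003` here) lose the most relative precision. That is the accuracy cost being traded for the smaller memory footprint.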

Configuring the Endpoint

When utilizing a sophisticated Inference Provider, the configuration goes far beyond simply pointing to the model ID. You must define resource constraints and scaling policies.

Consider the following conceptual YAML configuration for a high-availability endpoint:

    # deployment_config.yaml
    apiVersion: mlo.devops.com/v1
    kind: InferenceEndpoint
    metadata:
      name: llm-optimized-service
    spec:
      model_id: deepinfra/llama-7b-quant
      resources:
        gpu_type: nvidia-a100
        min_replicas: 2
        max_replicas: 10
      performance_tuning:
        batch_size: 8 # Crucial for maximizing GPU utilization
        quantization_level: int8
        p99_latency_target_ms: 150
      security:
        auth_scope: oauth2
        rate_limit: 1000 # requests per minute

This configuration dictates that the service must maintain at least two replicas, scale up to ten under load, and, crucially, process requests in batches of up to eight to maximize the utilization of the expensive A100 GPU resources, all while targeting a P99 latency of 150 ms.
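How a serving layer might turn individual requests into batches can be sketched as follows. This is a simplified simulation, not the behavior of any specific provider: `max_batch_size` mirrors the `batch_size: 8` setting above, while `max_wait_ms` is an assumed extra parameter (not present in the config) that caps how long the oldest request waits for a batch to fill.

```python
from dataclasses import dataclass

@dataclass
class Request:
    request_id: int
    arrival_ms: float

def form_batches(requests, max_batch_size=8, max_wait_ms=10.0):
    """Close a batch when it is full, or when a new arrival would make the
    oldest queued request wait longer than max_wait_ms."""
    batches, current = [], []
    for req in sorted(requests, key=lambda r: r.arrival_ms):
        if current and req.arrival_ms - current[0].arrival_ms > max_wait_ms:
            batches.append(current)  # timeout: ship a partial batch
            current = []
        current.append(req)
        if len(current) == max_batch_size:
            batches.append(current)  # full: ship immediately
            current = []
    if current:
        batches.append(current)  # flush whatever is left
    return batches

# Burst traffic: 20 requests arriving 1 ms apart.
burst = [Request(i, float(i)) for i in range(20)]
# Sparse traffic: 3 requests arriving 50 ms apart.
sparse = [Request(i, i * 50.0) for i in range(3)]

print([len(b) for b in form_batches(burst)])
print([len(b) for b in form_batches(sparse)])
```

Under bursty load the batches fill completely, while sparse traffic degrades to batches of one. The wait cap is what keeps latency bounded in the sparse case, at the cost of lower GPU utilization.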

For those looking at specialized, high-performance deployments, reviewing the capabilities of services like DeepInfra on Hugging Face provides excellent real-world examples of these optimization layers in action.

Phase 3: Senior-Level Best Practices, SecOps, and Resilience

For senior engineers, the focus shifts from “Does it work?” to “How reliable, secure, and cost-effective is it at 10x scale?”

1. SecOps Hardening and Zero Trust

The model endpoint is a high-value target. Treat it as a critical API gateway.

- Authentication: Never rely solely on API keys. Implement OAuth 2.0 or JWT validation at the API Gateway level.

- Network Segmentation: The endpoint should reside in a private subnet, accessible only via a secured Service Mesh (e.g., Istio).

- Input Validation: Implement strict schema validation and rate limiting. Malformed inputs can trigger resource exhaustion attacks.
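The input validation and rate limiting items above can be sketched in a few lines of standard-library Python. The field names (`prompt`, `max_tokens`), the size limits, and the `TokenBucket` class are hypothetical choices for illustration; in practice these checks usually live in the API gateway or a validation library rather than hand-written code.

```python
MAX_PROMPT_CHARS = 4000   # hypothetical limit
MAX_OUTPUT_TOKENS = 1024  # hypothetical limit

def validate_request(payload):
    """Return a list of validation errors; an empty list means the payload is accepted."""
    if not isinstance(payload, dict):
        return ["payload must be a JSON object"]
    errors = []
    prompt = payload.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        errors.append("prompt must be a non-empty string")
    elif len(prompt) > MAX_PROMPT_CHARS:
        errors.append("prompt exceeds maximum length")
    max_tokens = payload.get("max_tokens", 256)
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(max_tokens, bool) or not isinstance(max_tokens, int) \
            or not 1 <= max_tokens <= MAX_OUTPUT_TOKENS:
        errors.append("max_tokens must be an integer between 1 and 1024")
    unknown = set(payload) - {"prompt", "max_tokens"}
    if unknown:
        errors.append("unknown fields: " + ", ".join(sorted(unknown)))
    return errors

class TokenBucket:
    """Token-bucket limiter: sustained rate_per_minute with bursts up to capacity."""
    def __init__(self, rate_per_minute, capacity, now=0.0):
        self.rate_per_second = rate_per_minute / 60.0
        self.capacity = capacity
        self.tokens = float(capacity)
        self.last_refill = now

    def allow(self, now):
        """Refill based on elapsed time, then spend one token if available."""
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_second)
        self.last_refill = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False

bucket = TokenBucket(rate_per_minute=60, capacity=2)
print([bucket.allow(now=0.0) for _ in range(3)])  # third call exceeds the burst
print(validate_request({"prompt": "x" * 5000, "max_tokens": 99999}))
```

Passing the clock in as `now` keeps the limiter deterministic and easy to test; a real deployment would feed it `time.monotonic()` and keep one bucket per client identity.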

2. Advanced Deployment Strategies

Relying on a single, monolithic deployment is a single point of failure.

- Canary Deployments: When updating the model, deploy the new version (v2) to a small subset of traffic (e.g., 5%). Monitor key metrics (error rate, latency, throughput) against the stable version (v1). Only promote v2 if performance metrics meet the defined SLOs.

- Shadow Deployment: Run the new model (v2) in parallel with the production model (v1), feeding it a copy of the live production traffic. Only v1’s responses are returned to users; v2’s outputs are logged for comparison. This allows you to test v2’s performance and stability under real load without impacting the user experience.
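The canary workflow above reduces to two decisions: which version serves a given request, and whether the canary has earned promotion. The sketch below illustrates both. The hashing scheme, the metric names (`error_rate`, `p99_ms`), and the tolerance values are assumptions chosen for the example, not the mechanism of any particular service mesh or rollout tool.

```python
import hashlib

def route_version(request_id, canary_percent):
    """Deterministically send a stable share of request IDs to the canary (v2).
    Hashing keeps a given ID pinned to the same version across calls."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return "v2" if bucket < canary_percent else "v1"

def should_promote(stable, canary, max_error_rate_delta=0.005, max_p99_ratio=1.10):
    """Promote only if the canary's error rate and P99 latency stay within tolerance of v1."""
    error_ok = canary["error_rate"] <= stable["error_rate"] + max_error_rate_delta
    latency_ok = canary["p99_ms"] <= stable["p99_ms"] * max_p99_ratio
    return error_ok and latency_ok

# Route 10,000 hypothetical user IDs with a 5% canary.
assignments = [route_version(f"user-{i}", 5) for i in range(10_000)]
canary_share = assignments.count("v2") / len(assignments)
print(f"canary share: {canary_share:.3f}")

# Hypothetical metrics collected during the canary window.
v1_metrics = {"error_rate": 0.010, "p99_ms": 140.0}
v2_metrics = {"error_rate": 0.012, "p99_ms": 150.0}
print("promote v2:", should_promote(v1_metrics, v2_metrics))
```

Hash-based routing is one common design choice because it avoids flip-flopping a single user between model versions. Weighted random routing at the load balancer is a simpler alternative when session consistency does not matter.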

3. Monitoring and Observability

Monitoring must be multi-dimensional. You need to track:

- Business Metrics: Success rate, total requests, revenue generated.

- Operational Metrics: CPU/GPU utilization, memory usage, request queue depth.

- Model Metrics: Data Drift (is the input data distribution changing?), Concept Drift (is the relationship between input and output changing?), and prediction confidence scores.
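One way to put a number on data drift is the Population Stability Index (PSI), which compares the binned distribution of a live feature against a reference sample. The sketch below is a plain-Python illustration with synthetic data. PSI is a swapped-in example metric, not one the article prescribes, and the frequently quoted alert threshold of roughly 0.25 is a rule of thumb that should be tuned per feature.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference sample and a live sample.
    Bin edges come from the reference range; out-of-range values go to the edge bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Synthetic feature values: a uniform reference and a copy shifted upward by 3.
reference = [i / 100 for i in range(1000)]
shifted = [x + 3.0 for x in reference]

print(f"PSI (no drift): {psi(reference, reference):.4f}")
print(f"PSI (shifted):  {psi(reference, shifted):.4f}")
```

PSI only covers input drift. Detecting concept drift generally requires ground-truth labels or a proxy signal, which arrive later and need a separate pipeline.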

A robust monitoring stack (e.g., Prometheus for metrics and alerting, Grafana for dashboards) should trigger automated rollbacks when any critical metric leaves its established tolerance band.

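    # Example: Automated rollback script triggered by high P99 latency
    # Assumes P99_LATENCY_MS is an integer supplied by the alerting hook.
    # STABLE_IMAGE is a placeholder; use the full image reference of your v1.0.0 build.
    STABLE_IMAGE="registry.example.com/llm-optimized-service:v1.0.0"
    if [ "${P99_LATENCY_MS:-0}" -gt 200 ]; then
      echo "CRITICAL: P99 latency exceeded 200ms. Initiating rollback."
      if kubectl set image deployment/llm-optimized-service llm-container="$STABLE_IMAGE"; then
        echo "Rollback to stable version v1.0.0 requested."
      else
        echo "Rollback failed." >&2
        exit 1
      fi
    else
      echo "Latency within acceptable bounds. Monitoring continues."
    fi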

💡 Pro Tip: Implement automated resource scaling based on predicted load, not just current load. By integrating historical traffic patterns and external event calendars (e.g., marketing campaigns), you can preemptively scale up replicas minutes before the traffic surge hits, so new replicas are already warm and cold-start latency is largely avoided.
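As a rough sketch of predictive scaling, the function below sizes the replica count for the peak forecast within a look-ahead window rather than for the current minute. The forecast values, the per-replica throughput, the headroom factor, and the lead time are all assumed inputs for illustration; the replica bounds reuse the 2 and 10 from the configuration earlier in this guide.

```python
import math

def replicas_needed(forecast_rps, per_replica_rps, min_replicas=2, max_replicas=10, headroom=0.2):
    """Replicas required to serve forecast_rps with spare headroom, clamped to the allowed range."""
    raw = forecast_rps * (1 + headroom) / per_replica_rps
    return max(min_replicas, min(max_replicas, math.ceil(raw)))

def plan_scaling(forecast_by_minute, per_replica_rps, lead_minutes=5):
    """For each minute, provision for the highest forecast within the next lead_minutes,
    so capacity is in place before the surge arrives."""
    plan = []
    for minute in range(len(forecast_by_minute)):
        window = forecast_by_minute[minute : minute + lead_minutes + 1]
        plan.append(replicas_needed(max(window), per_replica_rps))
    return plan

# Hypothetical forecast (requests per second, one value per minute) with a surge at minute 3.
forecast = [50, 50, 50, 400, 400, 50]

print(plan_scaling(forecast, per_replica_rps=100, lead_minutes=2))
```

Choose the lead time to cover how long a new replica takes to pull the image, load weights onto the GPU, and pass readiness checks. For large models that can be several minutes.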

Conclusion: The Future of Inference

The evolution of Inference Providers is inextricably linked to the advancement of model size and complexity. As models become multimodal and larger (approaching a trillion parameters), the need for specialized, highly optimized serving infrastructure becomes paramount.

By mastering the interplay between architectural choice (self-host vs. managed), deep performance tuning (quantization, batching), rigorous SecOps practices (Zero Trust, input validation), and safe release strategies (canary and shadow deployments), you move beyond simply deploying models. You build resilient, enterprise-grade AI platforms.

For further professional development and understanding the roles required to manage these complex systems, explore career paths at https://www.devopsroles.com/. The ability to manage the entire lifecycle—from training to optimized inference—is the hallmark of a modern MLOps engineer.