The modern software development lifecycle (SDLC) is characterized by complexity. We no longer deal with monolithic applications; we manage microservices, AI models, and highly specialized security tooling. This proliferation of tools—from static analysis security testing (SAST) engines to dedicated AI vulnerability scanners—creates a significant integration challenge.

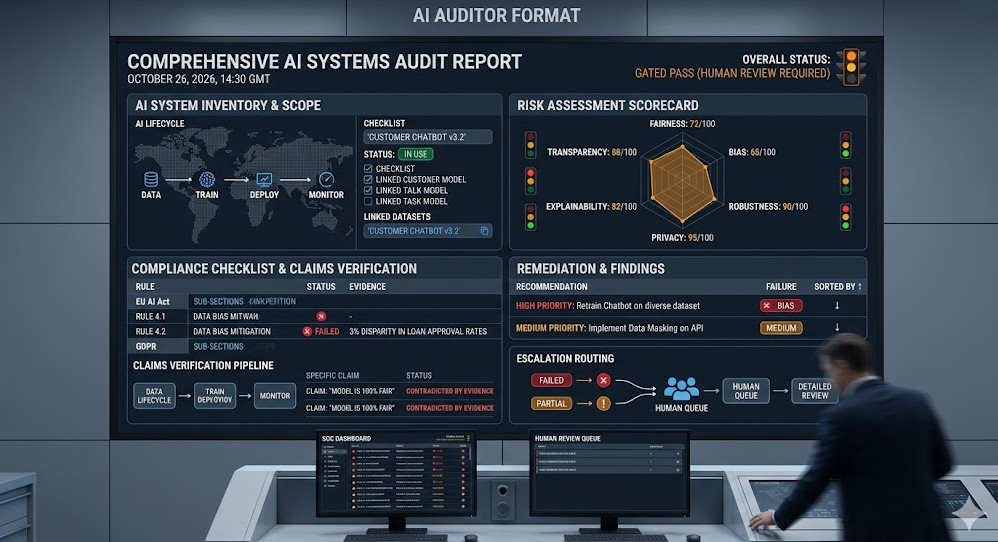

How do you ensure that the findings from a specialized, proprietary AI auditor are consumed, displayed, and acted upon consistently within a standard platform like GitHub? The answer lies in standardization: the AI auditor format.

This deep-dive guide is engineered for Senior DevOps, MLOps, and SecOps engineers. We will move beyond conceptual understanding to provide a hands-on, architecturally sound blueprint for integrating specialized AI scanning results into your core CI/CD workflow using the industry-standard SARIF format.

Phase 1: The Architectural Imperative – Why Standardization Matters

Before diving into YAML and JSON, we must understand the problem space. Every security tool speaks a different language. A traditional SAST tool might output XML, a dependency scanner might use proprietary JSON, and a specialized AI model auditor might generate a unique, custom report.

If your CI/CD pipeline needs a custom parser for every tool, it becomes brittle and hard to maintain, and each new scanner adds another parser to write, test, and keep in sync. This is the architectural bottleneck we must solve.

What is SARIF and Why is it the Gold Standard?

SARIF (Static Analysis Results Interchange Format) is an OASIS standard: a JSON-based format designed to unify the output of static analysis tools. It provides a structured, vendor-agnostic way to report findings, including metadata such as severity, file location, and suggested fixes.

In this guide, the AI auditor format simply means SARIF as emitted when the source of the findings is an advanced, AI-driven analysis engine (e.g., one analyzing model drift, data poisoning vectors, or complex logic flaws). It is a usage of SARIF, not a separate standard.

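SARIF defines fields such as ruleId, level (severity), and locations. The specification itself requires little more than a message on each result, but consuming platforms such as GitHub rely on these fields to display and track findings, so the converter should always populate them. By adhering to this structure, GitHub (and other consuming platforms) can reliably interpret the findings regardless of the underlying scanning technology.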
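To make the structure concrete, here is a minimal sketch that builds a one-result SARIF 2.1.0 log as a plain Python dictionary. The tool name, rule ID, message, and file path in the usage note are placeholders chosen for illustration, not values from any real auditor.

```python
import json


def minimal_sarif(tool_name: str, rule_id: str, message: str, path: str, line: int) -> dict:
    """Build a SARIF 2.1.0 log containing a single result."""
    return {
        "$schema": "https://json.schemastore.org/sarif-2.1.0.json",
        "version": "2.1.0",
        "runs": [
            {
                # Identifies which tool produced the findings in this run.
                "tool": {"driver": {"name": tool_name, "rules": [{"id": rule_id}]}},
                "results": [
                    {
                        "ruleId": rule_id,
                        "level": "error",  # one of: none, note, warning, error
                        "message": {"text": message},
                        "locations": [
                            {
                                "physicalLocation": {
                                    "artifactLocation": {"uri": path},
                                    "region": {"startLine": line},
                                }
                            }
                        ],
                    }
                ],
            }
        ],
    }


def to_json(log: dict) -> str:
    """Serialize the log the way it would be written to disk."""
    return json.dumps(log, indent=2)
```

Calling `to_json(minimal_sarif("example-ai-auditor", "AI001", "Unvalidated model input", "src/inference.py", 42))` yields a document with the three levels that matter here: the log, a run tied to one tool, and the results inside that run.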

The Role of the AI Auditor in the Pipeline

In a typical MLOps workflow, the AI auditor doesn’t just scan code; it scans the context of the code. It might analyze the training data dependencies, the model architecture, or the inference endpoints for vulnerabilities that traditional SAST tools miss.

The output of this specialized audit must therefore be packaged into the AI auditor format (SARIF) to ensure it can be treated as a first-class citizen alongside standard code vulnerabilities.

💡 Pro Tip: When designing your pipeline, treat the SARIF generation step as a critical, version-controlled artifact. Do not allow the generation logic to be ad-hoc; it must be a dedicated, testable microservice responsible solely for mapping proprietary findings into the standardized SARIF schema.

Phase 2: Practical Implementation – Integrating SARIF into CI/CD

Integrating the AI auditor format requires careful orchestration within your CI/CD system. We are moving from a “run scanner, get report” mentality to a “run scanner, generate standardized artifact” mentality.

Step 1: The Auditing Tool Output

Assume you have a proprietary AI auditor that outputs its raw findings in a structured, but non-SARIF, format (e.g., a custom JSON payload).

The first step is to build a dedicated SARIF converter script. This script must ingest the raw findings and map them meticulously to the SARIF schema. This involves mapping custom severity levels (e.g., AI_CRITICAL) to standardized SARIF levels (e.g., error).

Step 2: The Conversion Script (Conceptual Example)

While the actual conversion logic is highly dependent on your source format, the principle is clear: transforming proprietary data into the standardized structure.

#!/bin/bash
# Convert the proprietary AI auditor report into a SARIF artifact.
# Usage: convert_to_sarif.sh [raw_report] [sarif_output]
set -euo pipefail

# Paths default to the file names used throughout this guide.
RAW_REPORT_PATH="${1:-raw_ai_report.json}"
OUTPUT_SARIF_PATH="${2:-sarif_ai_findings.json}"

echo "Starting SARIF conversion for AI auditor findings..."

# convert_to_sarif.py stands in for a dedicated Python/Go converter
# that handles the complex JSON schema mapping.
if python3 convert_to_sarif.py --input "$RAW_REPORT_PATH" --output "$OUTPUT_SARIF_PATH"; then
    echo "Successfully generated SARIF artifact at $OUTPUT_SARIF_PATH"
else
    echo "ERROR: SARIF conversion failed. Check mapping logic." >&2
    exit 1
fi
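The convert_to_sarif.py that the script invokes is not shown in this guide. A minimal sketch of what it might look like follows. The raw report layout (a top-level `findings` list with `id`, `severity`, `description`, `file`, and `line` keys), the severity names other than AI_CRITICAL, and the tool name are all assumptions made for illustration; a real auditor's format will differ.

```python
import argparse
import json

# Assumed mapping from the auditor's severities to SARIF levels.
# In practice this table would be loaded from a reviewed policy file.
SEVERITY_TO_LEVEL = {
    "AI_CRITICAL": "error",
    "AI_HIGH": "error",
    "AI_MEDIUM": "warning",
    "AI_LOW": "note",
}


def to_result(finding: dict) -> dict:
    """Map one raw finding (hypothetical layout) to a SARIF result."""
    return {
        "ruleId": finding["id"],
        # Unknown severities fall back to "warning" rather than being dropped.
        "level": SEVERITY_TO_LEVEL.get(finding["severity"], "warning"),
        "message": {"text": finding["description"]},
        "locations": [
            {
                "physicalLocation": {
                    "artifactLocation": {"uri": finding["file"]},
                    # SARIF line numbers start at 1.
                    "region": {"startLine": max(1, int(finding.get("line", 1)))},
                }
            }
        ],
    }


def convert(raw: dict, tool_name: str = "example-ai-auditor") -> dict:
    """Convert a raw report into a SARIF 2.1.0 log with a single run."""
    results = [to_result(f) for f in raw.get("findings", [])]
    rule_ids = sorted({r["ruleId"] for r in results})
    return {
        "$schema": "https://json.schemastore.org/sarif-2.1.0.json",
        "version": "2.1.0",
        "runs": [
            {
                "tool": {"driver": {"name": tool_name, "rules": [{"id": rid} for rid in rule_ids]}},
                "results": results,
            }
        ],
    }


def main(argv=None) -> int:
    """CLI entry point matching the --input/--output flags used by the shell script."""
    parser = argparse.ArgumentParser(description="Convert a raw AI auditor report to SARIF.")
    parser.add_argument("--input", required=True)
    parser.add_argument("--output", required=True)
    args = parser.parse_args(argv)
    with open(args.input, encoding="utf-8") as fh:
        raw = json.load(fh)
    with open(args.output, "w", encoding="utf-8") as fh:
        json.dump(convert(raw), fh, indent=2)
    return 0
```

To use it as a script, call `raise SystemExit(main())` under an `if __name__ == "__main__":` guard. Keeping `convert` free of file I/O makes the mapping easy to unit test against sample reports.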

Step 3: Publishing the Artifact to GitHub

Once the sarif_ai_findings.json artifact is generated, the final step is to publish it to GitHub. GitHub's code scanning feature accepts SARIF uploads from a workflow, and the findings then appear as code scanning alerts in the “Security” tab of your repository. The job that uploads the file needs write access to security events.

This process is typically executed as a final step in your CI/CD workflow, ensuring that the standardized report is available for review alongside other security findings.

# .github/workflows/security_scan.yml
name: DevSecOps Scan Pipeline

on: [push]

jobs:
  security_audit:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      security-events: write  # required to upload SARIF to code scanning
    steps:
      - uses: actions/checkout@v4

      # Step 1: Run the proprietary AI Auditor
      - name: Run AI Auditor Scan
        run: |
          # This command executes the specialized AI auditor
          ./run_ai_auditor.sh --target "$GITHUB_SHA" --output raw_ai_report.json

      # Step 2: Convert raw findings to standardized SARIF format
      - name: Convert to SARIF Format
        run: |
          bash ./scripts/convert_to_sarif.sh raw_ai_report.json sarif_ai_findings.json

      # Step 3: Publish the SARIF artifact to GitHub
      - name: Upload SARIF Results
        uses: github/codeql-action/upload-sarif@v3
        with:
          sarif_file: sarif_ai_findings.json

Phase 3: Senior-Level Best Practices, Advanced Topics, and Troubleshooting

Achieving successful integration is only half the battle. Maintaining, optimizing, and scaling this process requires senior-level architectural thinking.

Advanced Topic 1: Handling False Positives and Triage

The most significant operational overhead in security scanning is false positive triage. When integrating an AI auditor format, you must build mechanisms to manage this.

Severity Mapping: Never blindly trust the raw severity from the AI tool. Implement a policy layer that maps the AI tool’s internal severity (e.g., ModelRiskLevel_3) to a standard SARIF level (note, warning, or error). This policy layer should be configurable via a YAML file, allowing security teams to adjust thresholds without touching code.

Suppression Mechanism: The SARIF format supports suppression. Your conversion script must be able to read a list of known false positive IDs (e.g., from a database or a suppressions.yaml file) and inject the necessary suppression metadata into the final SARIF output.
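As an illustration of the suppression step, the sketch below marks matching results with a SARIF `suppressions` entry. The shape of the suppression list (entries with `rule_id`, `path`, and `reason`) is an assumption for this example, standing in for whatever a suppressions.yaml or database lookup would return. Matching on rule ID plus file path is also just one possible choice.

```python
import copy


def _first_uri(result: dict):
    """Return the file URI of the result's first location, or None."""
    locations = result.get("locations") or [{}]
    return locations[0].get("physicalLocation", {}).get("artifactLocation", {}).get("uri")


def apply_suppressions(sarif: dict, suppressions: list) -> dict:
    """Return a copy of `sarif` with known false positives marked as suppressed.

    Each entry in `suppressions` is a dict with hypothetical keys
    `rule_id`, `path`, and an optional `reason`.
    """
    index = {(s["rule_id"], s["path"]): s.get("reason", "") for s in suppressions}
    out = copy.deepcopy(sarif)  # leave the caller's log untouched
    for run in out.get("runs", []):
        for result in run.get("results", []):
            key = (result.get("ruleId"), _first_uri(result))
            if key in index:
                result.setdefault("suppressions", []).append(
                    {
                        "kind": "external",  # suppressed outside the source code
                        "status": "accepted",
                        "justification": index[key],
                    }
                )
    return out
```

The finding stays in the file with its suppression recorded, which preserves an audit trail. How a consuming platform displays suppressed results varies, so verify the behavior on the platform you upload to.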

Advanced Topic 2: Orchestration and Policy-as-Code

For enterprise-grade pipelines, the entire security scanning process should be treated as Policy-as-Code. This means the rules governing how the scans run, what thresholds trigger a failure, and how the results are standardized, are all stored in version control.

Consider using a dedicated policy engine (like Open Policy Agent – OPA) to validate the generated SARIF artifact before it is uploaded. OPA can enforce rules like: “If the AI auditor detects a vulnerability in the authentication module, the build must fail, regardless of the severity level.”
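OPA policies are written in Rego, which is outside the scope of this guide. To show the logic of such a gate, here is the same rule sketched in plain Python instead. The `src/auth/` prefix is an assumed location for the authentication module, and failing on any error-level finding elsewhere is an added assumption about the baseline policy.

```python
def _uris(result: dict) -> list:
    """Collect the file URIs of every location attached to a result."""
    return [
        loc.get("physicalLocation", {}).get("artifactLocation", {}).get("uri", "")
        for loc in result.get("locations", [])
    ]


def evaluate_gate(sarif: dict, protected_prefixes=("src/auth/",)) -> list:
    """Return a list of violations; an empty list means the build may proceed."""
    prefixes = tuple(protected_prefixes)
    violations = []
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            rule_id = result.get("ruleId", "<no ruleId>")
            # SARIF treats a missing level as "warning".
            level = result.get("level", "warning")
            if any(uri.startswith(prefixes) for uri in _uris(result)):
                # Protected paths fail the build regardless of severity.
                violations.append(f"{rule_id}: finding in protected path (level={level})")
            elif level == "error":
                violations.append(f"{rule_id}: error-level finding")
    return violations
```

A pipeline step would load the SARIF file, call `evaluate_gate`, print the violations, and exit non-zero if the list is not empty. Running the gate before the upload step keeps the decision in version control next to the rest of the policy.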

Troubleshooting Common SARIF Integration Failures

- Schema Drift: If the AI auditor updates its internal data structure, the SARIF converter script will break. Implement rigorous unit testing on the converter script, simulating schema changes.

- Scope Mismatch: Ensure the tool and run objects within the SARIF are correctly scoped. The consuming platform needs to know which tool generated the finding and which run/commit it applies to.

- Data Overload: If your AI auditor generates hundreds of findings, the resulting SARIF file can become massive. Implement sampling or aggregation logic to only report the top N critical findings, keeping the artifact manageable and actionable.
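The top-N idea from the last item can be sketched as follows. Ranking purely by SARIF level is an assumption; a real pipeline might also weigh rule category or the auditor's confidence score.

```python
import copy

# Lower rank means more severe. Unknown levels are treated like "warning".
LEVEL_RANK = {"error": 0, "warning": 1, "note": 2, "none": 3}


def keep_top_n(sarif: dict, n: int) -> dict:
    """Return a copy of `sarif` keeping at most `n` most severe results per run."""
    out = copy.deepcopy(sarif)
    for run in out.get("runs", []):
        ranked = sorted(
            run.get("results", []),
            # A missing level defaults to "warning", as in SARIF itself.
            key=lambda r: LEVEL_RANK.get(r.get("level", "warning"), 1),
        )
        # sorted() is stable, so ties keep the auditor's original order.
        run["results"] = ranked[:n]
    return out
```

Truncation hides findings from reviewers, so it is worth archiving the full SARIF file elsewhere and logging how many results were dropped.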

💡 Pro Tip: For large organizations, consider implementing a dedicated Security Data Lake. Instead of just uploading the SARIF artifact to GitHub, stream the raw SARIF data into a centralized data store (like Snowflake or Elasticsearch). This allows you to run historical trend analysis, track remediation velocity, and correlate findings across multiple repositories and different tools simultaneously.
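Before SARIF can be queried in a data store, the nested results usually need flattening into rows. The sketch below does only that and emits JSON Lines; the row field names and the `repository` and `commit` parameters are assumptions, and the actual client for Snowflake or Elasticsearch is deliberately left out.

```python
import json


def flatten_results(sarif: dict, repository: str, commit: str):
    """Yield one flat row per SARIF result, tagged with repository and commit."""
    for run in sarif.get("runs", []):
        tool = run.get("tool", {}).get("driver", {}).get("name", "unknown")
        for result in run.get("results", []):
            locations = result.get("locations") or [{}]
            physical = locations[0].get("physicalLocation", {})
            yield {
                "repository": repository,
                "commit": commit,
                "tool": tool,
                "rule_id": result.get("ruleId"),
                "level": result.get("level", "warning"),
                "message": result.get("message", {}).get("text", ""),
                "uri": physical.get("artifactLocation", {}).get("uri"),
                "start_line": physical.get("region", {}).get("startLine"),
            }


def to_json_lines(rows) -> str:
    """Serialize rows as JSON Lines, one finding per line."""
    return "\n".join(json.dumps(row, sort_keys=True) for row in rows)
```

Tagging every row with the repository and commit is what makes cross-repository correlation and remediation tracking possible later.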

The Value of Unified Reporting

By mastering the AI auditor format and adhering to SARIF, you achieve true DevSecOps maturity. You move from a collection of siloed reports to a single, unified, actionable source of truth.

This standardization is crucial for compliance reporting and auditability. It allows security teams to prove that every piece of code, regardless of how complex the underlying AI logic is, has passed through a standardized, auditable security gate.

For a deeper technical dive into the specifics of the SARIF schema and its implementation, we recommend reviewing the official SARIF format connection guide.

By adopting this structured approach, your CI/CD pipeline becomes not just a build system, but a sophisticated, policy-enforcing security gate.