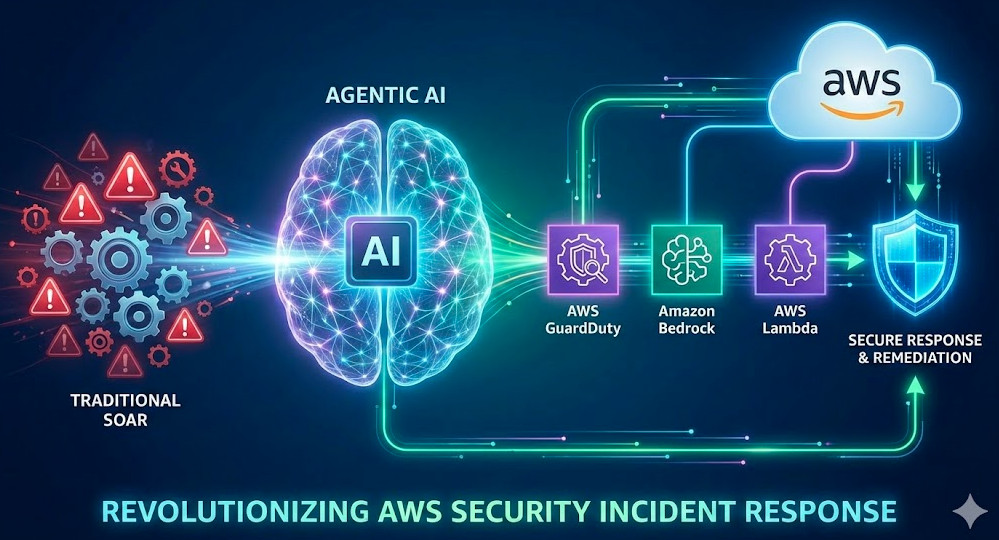

For years, the gold standard in cloud security has been defined by deterministic automation. We detect an anomaly in Amazon GuardDuty, trigger a CloudWatch Event (now EventBridge), and fire a Lambda function to execute a hard-coded remediation script. While effective for known threats, this approach is brittle. It lacks context, reasoning, and adaptability.

Enter Agentic AI. By integrating Large Language Models (LLMs) via services like Amazon Bedrock into your security stack, we are moving from static “Runbooks” to dynamic “Reasoning Engines.” AWS Security Incident Response is no longer just about automation; it is about autonomy. This guide explores how to architect Agentic workflows that can analyze forensics, reason through containment strategies, and execute remediation with human-level nuance at machine speed.

The Evolution: From SOAR to Agentic Security

Traditional Security Orchestration, Automation, and Response (SOAR) platforms rely on linear logic: If X, then Y. This works for blocking an IP address, but it fails when the threat requires investigation. For example, if an IAM role is exfiltrating data, a standard script might revoke the role's credentials immediately, potentially breaking production applications, whereas a human analyst would first check whether the activity aligns with a scheduled maintenance window.

Agentic AI introduces the ReAct (Reasoning + Acting) pattern to AWS Security Incident Response. Instead of blindly firing scripts, the AI Agent:

- Observes the finding (e.g., “S3 Bucket Public Access Enabled”).

- Reasons about the context (Queries CloudTrail: “Who did this? Was it authorized?”).

- Acts using defined tools (Calls `boto3` functions to correct the policy).

- Evaluates the result (Verifies the bucket is private).
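The four steps above can be sketched as a small loop. This is a minimal illustration only: `Bucket`, `lookup_actor`, `block_public_access`, and `decide` are hypothetical stand-ins for S3 state, a CloudTrail query, a `boto3` call, and the LLM's reasoning step. No AWS service or model is called.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class Bucket:
    """Hypothetical in-memory stand-in for an S3 bucket's state."""
    name: str
    public: bool
    changed_by: str


@dataclass
class Trace:
    """Records each phase of the loop so the run can be audited afterwards."""
    steps: List[Tuple[str, str]] = field(default_factory=list)

    def log(self, phase: str, detail: str) -> None:
        self.steps.append((phase, detail))


def lookup_actor(bucket: Bucket) -> str:
    """Stand-in for a CloudTrail query answering 'who did this?'."""
    return bucket.changed_by


def block_public_access(bucket: Bucket) -> None:
    """Stand-in for a boto3 call that corrects the bucket policy."""
    bucket.public = False


def decide(finding: str, actor: str, authorized: Set[str]) -> str:
    """Stand-in for the LLM's reasoning step: a fixed rule, not a model call."""
    if finding == "S3 Bucket Public Access Enabled" and actor not in authorized:
        return "block_public_access"
    return "no_action"


def run_agent(bucket: Bucket, finding: str, authorized: Set[str]) -> Trace:
    trace = Trace()
    trace.log("observe", finding)

    actor = lookup_actor(bucket)
    action = decide(finding, actor, authorized)
    trace.log("reason", f"changed by {actor}; chosen action: {action}")

    if action == "block_public_access":
        block_public_access(bucket)
    trace.log("act", action)

    trace.log("evaluate", "private" if not bucket.public else "still public")
    return trace


if __name__ == "__main__":
    bucket = Bucket(name="audit-logs", public=True, changed_by="unknown-user")
    trace = run_agent(bucket, "S3 Bucket Public Access Enabled", {"deploy-bot"})
    for phase, detail in trace.steps:
        print(f"{phase:>8}: {detail}")
```

The point of the sketch is the shape of the loop: the action is chosen after context is gathered, and the result is verified rather than assumed.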

GigaCode Pro-Tip:

Don’t confuse “Generative AI” with “Agentic AI.” Generative AI writes a report about the hack. Agentic AI logs into the console (via API) and fixes the hack. The differentiator is the Action Group.

Architecture: Building a Bedrock Security Agent

To modernize your AWS Security Incident Response, we leverage Amazon Bedrock Agents. This managed service orchestrates the interaction between the LLM (reasoning), the knowledge base (RAG for company policies), and the action groups (Lambda functions).

1. The Foundation: Knowledge Bases

Your agent needs context. Using Retrieval-Augmented Generation (RAG), you can index your internal Wiki, incident response playbooks, and architecture diagrams into an Amazon OpenSearch Serverless vector store connected to Bedrock. When a finding occurs, the agent first queries this base: “What is the protocol for a compromised EC2 instance in the Production VPC?”
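A Bedrock Agent queries its attached knowledge base on its own, but the same lookup can be tried directly while tuning the playbook index. The sketch below assumes the `retrieve` operation of the `bedrock-agent-runtime` client. The function names and the knowledge base ID are placeholders, and the client is passed in so the logic can be exercised without AWS access.

```python
from typing import Any, Dict, List


def build_retrieve_request(
    knowledge_base_id: str, question: str, top_k: int = 3
) -> Dict[str, Any]:
    """Build keyword arguments for the bedrock-agent-runtime `retrieve` call."""
    return {
        "knowledgeBaseId": knowledge_base_id,
        "retrievalQuery": {"text": question},
        "retrievalConfiguration": {
            "vectorSearchConfiguration": {"numberOfResults": top_k}
        },
    }


def fetch_playbook_passages(
    client: Any, knowledge_base_id: str, question: str, top_k: int = 3
) -> List[str]:
    """Return the text of the passages the knowledge base ranks highest."""
    request = build_retrieve_request(knowledge_base_id, question, top_k)
    response = client.retrieve(**request)
    return [r["content"]["text"] for r in response.get("retrievalResults", [])]


# Usage (requires AWS credentials; "KB_ID_PLACEHOLDER" is not a real ID):
#   import boto3
#   client = boto3.client("bedrock-agent-runtime")
#   fetch_playbook_passages(
#       client,
#       "KB_ID_PLACEHOLDER",
#       "What is the protocol for a compromised EC2 instance in the Production VPC?",
#   )
```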

2. Action Groups (The Hands)

Action groups map OpenAPI schemas to AWS Lambda functions. This allows the LLM to “call” Python code. Below is an example of a remediation tool that an agent might decide to use during an active incident.
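For the schema half of that mapping, a hedged sketch of what an OpenAPI definition for the isolation tool might look like is shown here as a Python dict. The path `/isolate-instance`, the `operationId`, and the response shape are illustrative assumptions, not taken from a deployed agent. The LLM relies on the `description` fields to decide when to call the tool, so they deserve as much care as the code.

```python
import json
from typing import Any, Dict, List

# Hypothetical OpenAPI 3.0 schema describing a single remediation tool.
ISOLATE_INSTANCE_SCHEMA: Dict[str, Any] = {
    "openapi": "3.0.0",
    "info": {"title": "Security remediation tools", "version": "1.0.0"},
    "paths": {
        "/isolate-instance": {
            "post": {
                "operationId": "IsolateInstance",
                "description": (
                    "Quarantine an EC2 instance by replacing its security "
                    "groups with a pre-provisioned isolation group. Use only "
                    "when the playbook calls for containment."
                ),
                "parameters": [
                    {
                        "name": "instance_id",
                        "in": "query",
                        "required": True,
                        "description": "ID of the EC2 instance to isolate.",
                        "schema": {"type": "string"},
                    }
                ],
                "responses": {
                    "200": {
                        "description": "Outcome of the isolation attempt.",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "properties": {"message": {"type": "string"}},
                                }
                            }
                        },
                    }
                },
            }
        }
    },
}


def required_parameters(schema: Dict[str, Any], path: str, method: str) -> List[str]:
    """List the parameter names the agent must supply for one operation."""
    operation = schema["paths"][path][method]
    return [p["name"] for p in operation.get("parameters", []) if p.get("required")]


def schema_as_json(schema: Dict[str, Any]) -> str:
    """Serialize the schema for upload when creating the action group."""
    return json.dumps(schema, indent=2)
```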

Code Implementation: The Isolation Tool

This Lambda function serves as a “tool” that the Bedrock Agent can invoke when it decides an instance must be quarantined.

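    import json
    import logging

    import boto3

    logger = logging.getLogger()
    logger.setLevel(logging.INFO)

    ec2 = boto3.client('ec2')

    # Finding or creating a 'Forensic-No-Ingress' Security Group is not shown here;
    # this ID is assumed to be pre-provisioned in the instance's VPC.
    ISOLATION_SG = "sg-0123456789abcdef0"


    def agent_response(event, status_code, message):
        """Wrap a message in the envelope Bedrock Agents expect from an
        OpenAPI-schema action group Lambda."""
        return {
            "messageVersion": "1.0",
            "response": {
                "actionGroup": event.get("actionGroup"),
                "apiPath": event.get("apiPath"),
                "httpMethod": event.get("httpMethod"),
                "httpStatusCode": status_code,
                "responseBody": {
                    "application/json": {"body": json.dumps({"message": message})}
                },
            },
        }


    def lambda_handler(event, context):
        """
        Tool for Bedrock Agent: Isolates an EC2 instance by attaching a forensic SG.
        Input: {'instance_id': 'i-xxxx', 'vpc_id': 'vpc-xxxx'} (only instance_id is read)
        """
        # Bedrock passes parameters as a list of {'name', 'type', 'value'} dicts.
        agent_params = event.get('parameters', [])
        instance_id = next(
            (p['value'] for p in agent_params if p['name'] == 'instance_id'), None
        )

        if not instance_id:
            return agent_response(event, 400, "Error: Instance ID is required for isolation.")

        try:
            logger.info(f"Agent requested isolation for {instance_id}")

            # 1. Record the current SGs for rollback context (Forensics)
            current_attr = ec2.describe_instance_attribute(
                InstanceId=instance_id, Attribute='groupSet'
            )
            previous_sgs = [g['GroupId'] for g in current_attr.get('Groups', [])]
            logger.info(f"Previous SGs for {instance_id}: {previous_sgs}")

            # 2. Replace the instance's SGs with the isolation SG
            ec2.modify_instance_attribute(
                InstanceId=instance_id,
                Groups=[ISOLATION_SG]
            )

            return agent_response(
                event, 200,
                f"SUCCESS: Instance {instance_id} has been isolated. Previous SGs logged for analysis."
            )
        except Exception as e:
            logger.error(f"Failed to isolate: {e}")
            return agent_response(
                event, 500, f"FAILED: Could not isolate instance. Reason: {e}"
            )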
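The isolation tool reads the instance's current `groupSet` "for rollback context". A companion sketch of that rollback path could look like the following. The helper names are hypothetical, the EC2 client is injected so the logic can be tested with a stub, and where the saved group IDs are stored between calls (a tag, DynamoDB, the incident ticket) is left open.

```python
from typing import Any, List, Sequence


def snapshot_security_groups(ec2_client: Any, instance_id: str) -> List[str]:
    """Return the security group IDs currently attached to the instance."""
    attr = ec2_client.describe_instance_attribute(
        InstanceId=instance_id, Attribute="groupSet"
    )
    return [group["GroupId"] for group in attr.get("Groups", [])]


def restore_security_groups(
    ec2_client: Any, instance_id: str, group_ids: Sequence[str]
) -> None:
    """Re-attach previously saved security groups, undoing an isolation."""
    if not group_ids:
        # EC2 requires at least one group, so refuse an empty rollback.
        raise ValueError("No saved security groups to restore.")
    ec2_client.modify_instance_attribute(
        InstanceId=instance_id, Groups=list(group_ids)
    )


# Usage (requires AWS credentials):
#   import boto3
#   ec2 = boto3.client("ec2")
#   saved = snapshot_security_groups(ec2, "i-xxxx")   # before isolating
#   restore_security_groups(ec2, "i-xxxx", saved)     # after the all-clear
```

Exposing rollback as its own tool keeps containment reversible, which makes it easier to justify letting an agent isolate first and ask questions later.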

Implementing the Workflow

Deploying this requires an Event-Driven Architecture. Here is the lifecycle of an Agentic AWS Security Incident Response:

- Detection: GuardDuty detects `UnauthorizedAccess:EC2/TorIPCaller`.

- Ingestion: EventBridge captures the finding and pushes it to an SQS queue (for throttling/buffering).

- Invocation: A Lambda “Controller” picks up the finding and invokes the Bedrock Agent Alias using the `invoke_agent` API.

- Reasoning Loop:

  - The Agent receives the finding details.

  - It checks the “Knowledge Base” and sees that Tor connections are strictly prohibited.

  - It decides to call the `GetInstanceDetails` tool to check tags.

  - It sees the tag `Environment: Production`.

  - It decides to call the `IsolateInstance` tool (code above).

- Resolution: The Agent updates AWS Security Hub with the workflow status, marks the finding as `RESOLVED`, and emails the SOC team a summary of its actions.
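The Ingestion and Invocation steps can be sketched as follows. This is a minimal outline, assuming the EventBridge rule delivers the unmodified event to SQS and that the Controller calls `invoke_agent` on the `bedrock-agent-runtime` client. The function names, prompt wording, and agent IDs are placeholders, and the client is injected so the handler can be exercised with a stub.

```python
import json
import uuid
from typing import Any, Dict, List

# EventBridge rule pattern that matches GuardDuty findings.
GUARDDUTY_EVENT_PATTERN = {
    "source": ["aws.guardduty"],
    "detail-type": ["GuardDuty Finding"],
}


def build_prompt(finding: Dict[str, Any]) -> str:
    """Turn the finding into the text handed to the agent (wording is illustrative)."""
    instance_id = (
        finding.get("resource", {})
        .get("instanceDetails", {})
        .get("instanceId", "unknown")
    )
    return (
        "A GuardDuty finding needs triage.\n"
        f"Type: {finding.get('type', 'unknown')}\n"
        f"Severity: {finding.get('severity', 'unknown')}\n"
        f"Instance: {instance_id}\n"
        "Follow the incident response playbook and report the actions taken."
    )


def invoke_security_agent(
    client: Any, agent_id: str, agent_alias_id: str, finding: Dict[str, Any]
) -> str:
    """Invoke the agent alias and join the streamed completion into one string."""
    response = client.invoke_agent(
        agentId=agent_id,
        agentAliasId=agent_alias_id,
        sessionId=str(uuid.uuid4()),  # one fresh session per finding
        inputText=build_prompt(finding),
    )
    parts: List[str] = []
    for event in response["completion"]:
        chunk = event.get("chunk")
        if chunk:  # other event types (e.g. trace) are skipped here
            parts.append(chunk["bytes"].decode("utf-8"))
    return "".join(parts)


def handle_sqs_batch(
    client: Any, agent_id: str, agent_alias_id: str, sqs_event: Dict[str, Any]
) -> List[str]:
    """Controller body: each SQS record holds one EventBridge event as JSON."""
    summaries = []
    for record in sqs_event.get("Records", []):
        envelope = json.loads(record["body"])
        summaries.append(
            invoke_security_agent(client, agent_id, agent_alias_id, envelope["detail"])
        )
    return summaries
```

In a real Lambda, `client` would be `boto3.client("bedrock-agent-runtime")` created at module load, and the agent and alias IDs would come from environment variables.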

Human-in-the-Loop (HITL) and Guardrails

For expert practitioners, the fear of “hallucinating” agents deleting production databases is real. To mitigate this in AWS Security Incident Response, we implement Guardrails for Amazon Bedrock.

Guardrails allow you to define denied topics and content filters. Furthermore, for high-impact actions (like terminating instances), you should design the Agent to request approval rather than execute immediately. The Agent can send an SNS notification with a standard “Approve/Deny” link. The Agent pauses execution until the approval signal is received via a callback webhook.
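One way to structure that approval gate is sketched below. It is an assumption-laden outline: the tool names in `HIGH_IMPACT_ACTIONS` are hypothetical, `pending` is an in-memory dict standing in for a durable store such as DynamoDB, and the webhook that eventually calls `resolve_approval` is not shown. Only the SNS `publish` call is a real AWS API.

```python
import json
import uuid
from typing import Any, Callable, Dict

# Hypothetical tool names considered too risky to run without a human decision.
HIGH_IMPACT_ACTIONS = {"TerminateInstance", "DeleteSnapshot", "RevokeRoleSessions"}

Executor = Callable[[str, Dict[str, Any]], Any]


def requires_approval(action: str) -> bool:
    return action in HIGH_IMPACT_ACTIONS


def request_or_execute(
    action: str,
    params: Dict[str, Any],
    execute: Executor,
    sns_client: Any,
    topic_arn: str,
    pending: Dict[str, Dict[str, Any]],
) -> Dict[str, Any]:
    """Run low-risk actions immediately; park high-impact ones for approval."""
    if not requires_approval(action):
        return {"status": "EXECUTED", "result": execute(action, params)}

    token = str(uuid.uuid4())
    pending[token] = {"action": action, "params": params}
    sns_client.publish(
        TopicArn=topic_arn,
        Subject=f"Approval needed: {action}",
        Message=json.dumps({"token": token, "action": action, "params": params}),
    )
    return {"status": "PENDING_APPROVAL", "token": token}


def resolve_approval(
    token: str,
    approved: bool,
    execute: Executor,
    pending: Dict[str, Dict[str, Any]],
) -> Dict[str, Any]:
    """Called by the approval callback; each token can be used only once."""
    request = pending.pop(token, None)
    if request is None:
        return {"status": "UNKNOWN_TOKEN"}
    if not approved:
        return {"status": "DENIED"}
    return {"status": "EXECUTED", "result": execute(request["action"], request["params"])}
```

Keeping the allow/deny decision in deterministic code, outside the model, means a confused agent can at worst ask for a destructive action; it cannot perform one.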

Pro-Tip: Use CloudTrail Lake to audit your Agents. Every API call made by the Agent (via the assumed IAM role) is logged. Create a QuickSight dashboard to visualize “Agent Remediation Success Rates” vs. “Human Intervention Required.”
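As a starting point for that audit, the sketch below builds a CloudTrail Lake SQL statement listing the API calls made under the Agent's role and submits it with `start_query`. The column names follow the standard CloudTrail event fields. The event data store ID, role name, and helper names are placeholders, and the exact query should be checked against your own event data store before it feeds a dashboard.

```python
import re
from typing import Any

_EDS_ID = re.compile(r"^[0-9a-f]{8}(-[0-9a-f]{4}){3}-[0-9a-f]{12}$")
_ROLE_NAME = re.compile(r"^[A-Za-z0-9+=,.@_-]{1,64}$")
_TIMESTAMP = re.compile(r"^\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}$")


def build_agent_audit_query(
    event_data_store_id: str, agent_role_name: str, since: str
) -> str:
    """Build SQL listing API calls made through the agent's assumed role.

    Inputs are validated because they are interpolated into the statement.
    """
    if not _EDS_ID.match(event_data_store_id):
        raise ValueError("event_data_store_id must be a lowercase UUID")
    if not _ROLE_NAME.match(agent_role_name):
        raise ValueError("agent_role_name contains unsupported characters")
    if not _TIMESTAMP.match(since):
        raise ValueError("since must look like 'YYYY-MM-DD HH:MM:SS'")
    return (
        "SELECT eventTime, eventSource, eventName, errorCode "
        f"FROM {event_data_store_id} "
        f"WHERE userIdentity.arn LIKE '%assumed-role/{agent_role_name}/%' "
        f"AND eventTime > '{since}' "
        "ORDER BY eventTime DESC"
    )


def start_agent_audit(
    cloudtrail_client: Any, event_data_store_id: str, agent_role_name: str, since: str
) -> str:
    """Submit the query and return its ID for later result retrieval."""
    statement = build_agent_audit_query(event_data_store_id, agent_role_name, since)
    response = cloudtrail_client.start_query(QueryStatement=statement)
    return response["QueryId"]
```

A non-empty `errorCode` marks a call the Agent attempted but could not complete, which is one possible input for the "success rate" side of the dashboard.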

Frequently Asked Questions (FAQ)

How does Agentic AI differ from AWS Lambda automation?

Lambda automation is deterministic (scripted steps). Agentic AI is probabilistic and reasoning-based. It can handle ambiguity, such as deciding not to act if a threat looks like a false positive based on cross-referencing logs, whereas a script would execute blindly.

Is it safe to let AI modify security groups automatically?

It is safe if scoped correctly using IAM Roles. The Agent’s role should adhere to the Principle of Least Privilege. Start with “Read-Only” agents that only perform forensics and suggest remediation, then graduate to “Active” agents for low-risk environments.

Which AWS services are required for this architecture?

At a minimum: Amazon Bedrock (Agents & Knowledge Bases), AWS Lambda (Action Groups), Amazon EventBridge (Triggers), Amazon GuardDuty (Detection), and AWS Security Hub (Centralized Management).

Conclusion

The landscape of AWS Security Incident Response is shifting. By adopting Agentic AI, organizations can reduce Mean Time to Respond (MTTR) from hours to seconds. However, this is not a “set and forget” solution. It requires rigorous engineering of prompts, action schemas, and IAM boundaries.

Start small: Build an agent that purely performs automated forensics—gathering logs, querying configurations, and summarizing the blast radius—before letting it touch your infrastructure. The future of cloud security is autonomous, and the architects who master these agents today will define the standards of tomorrow.

For deeper reading on configuring Bedrock Agents, consult the official AWS Bedrock User Guide or review the AWS Security Incident Response Guide.